
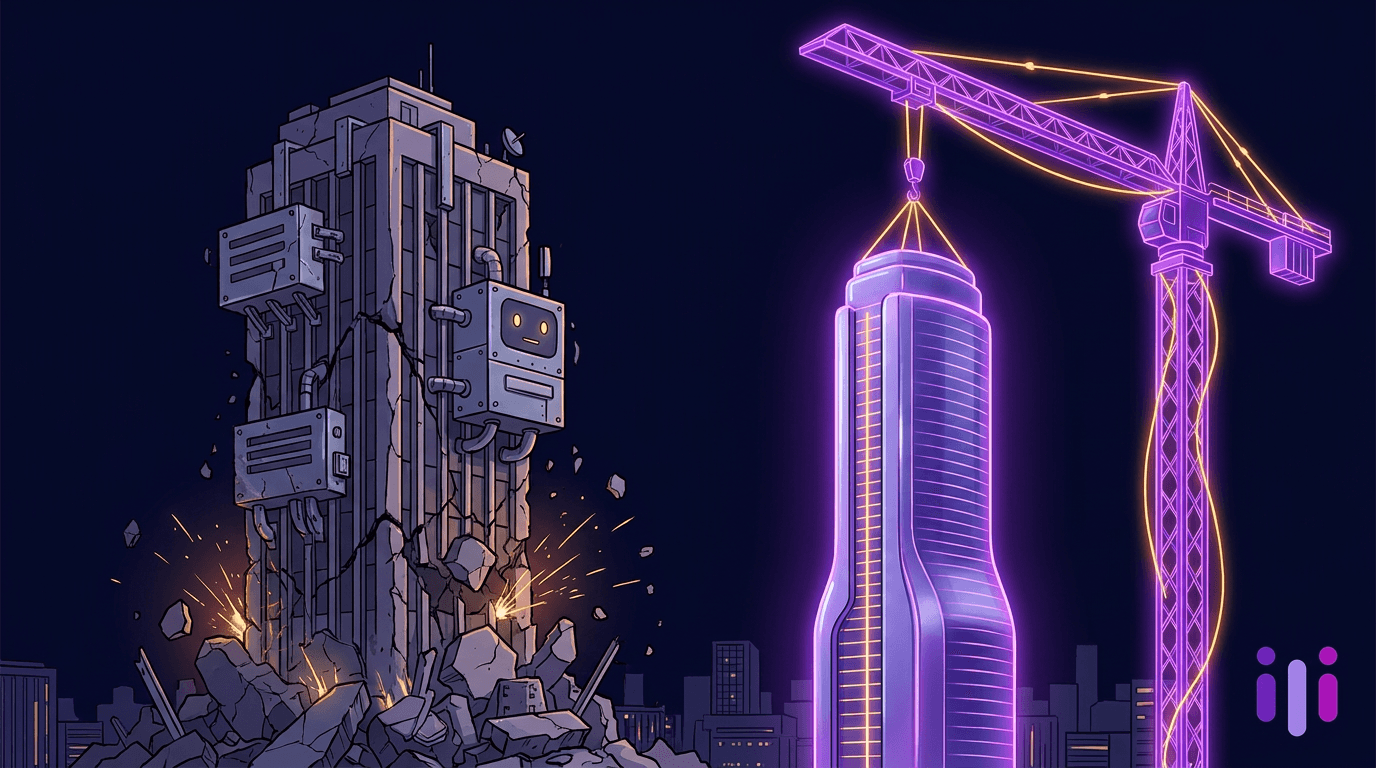

AI-Native Customer Engagement: Why the Engagement Stack Needs to Be Rebuilt, Not Bolted On

TL;DR

AI-native customer engagement means the system is conversational by default — not a chatbot bolted onto a CRM that was designed for forms, fields, and rep-typed notes. Most products marketed today as "AI customer engagement" are exactly that bolt-on: Salesforce Einstein, HubSpot Breeze, Zendesk AI agents, Intercom Fin, and the long tail of vendor-attached copilots. They make legacy stacks faster, but they keep the underlying schema — contact records, ticket forms, opportunity stages — as the source of truth, with AI bolted on as a thin language layer. AI-native engagement inverts the architecture: every interaction begins as a conversation, the conversation is the primary record, and structured fields are derived from what the customer actually said. This piece argues the engagement stack needs to be rebuilt, not retrofitted, and lays out the migration path from CRM-centric to conversation-centric — including which pieces survive, which get deprecated, and what teams should pilot in the next 90 days. The cost of staying bolt-on is not slower software; it's a permanent ceiling on how much customer truth you can capture.

Bolt-on AI vs AI-native: the architectural difference

The architectural difference between bolt-on AI and AI-native engagement is where the customer's words live. In a bolt-on system, the customer's words are a temporary input that gets compressed into a CRM field, a ticket category, or a survey score — the words are the byproduct, the structured row is the artifact. In an AI-native system, the conversation itself is the artifact; the structured row is derived, queried, and re-derivable from the underlying transcript whenever the question changes.

This sounds abstract until you trace what happens to a single customer message in each architecture. In a bolt-on stack, a customer types "I'm thinking about churning because the new pricing tier moved the SSO feature out of reach for our team." A rep, agent, or AI summarizer reads it and does one of three things: (1) creates a churn-risk flag in the CRM, (2) routes the ticket to retention, or (3) writes a one-line note in the account record. The original sentence — with its causality, its emotional charge, the specific feature called out, the team-size context — is gone in a week. The CRM remembers a flag and a note. It does not remember the sentence.

In an AI-native architecture, that sentence is the durable record. Every downstream system — churn prediction, NPS context, product feedback, win-loss analysis, account-health scoring — pulls from the conversational corpus. Six months later, when a PM asks "how often do customers cite SSO as a churn driver," the answer is a query against actual sentences, not a count of CRM tags some rep remembered to set.

This is the same shift that happened in analytics a decade ago. We stopped pre-aggregating into rigid OLAP cubes and moved to event-level data warehouses where you could re-ask any question. Engagement is going through the same migration — and the conversational data collection method is what makes it possible.
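To make the re-query idea concrete, here is a minimal sketch of the PM's SSO question asked against stored sentences rather than CRM tags. Everything in it is an illustrative assumption: the `Utterance` record, the `accounts_citing` helper, the cue words, and the sample accounts and dates are hypothetical, and plain substring matching stands in for whatever semantic search or classification a production corpus would use.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Utterance:
    """One customer message kept verbatim in the corpus (hypothetical schema)."""
    account_id: str
    said_on: date
    text: str


# Hypothetical cue words. Substring matching is deliberately crude here;
# it would also match unrelated words that happen to contain a cue.
CHURN_CUES = ("churn", "cancel", "switch", "leave")


def accounts_citing(corpus, topic, cues=CHURN_CUES, since=None):
    """Return account ids whose messages mention `topic` alongside a churn cue."""
    hits = set()
    for utterance in corpus:
        if since is not None and utterance.said_on < since:
            continue
        lowered = utterance.text.lower()
        if topic.lower() in lowered and any(cue in lowered for cue in cues):
            hits.add(utterance.account_id)
    return hits


# Sample data for illustration only; accounts and dates are made up.
corpus = [
    Utterance("acme", date(2025, 1, 14),
              "I'm thinking about churning because the new pricing tier moved "
              "the SSO feature out of reach for our team."),
    Utterance("globex", date(2025, 2, 3),
              "Onboarding went well, and SSO setup took ten minutes."),
    Utterance("initech", date(2025, 2, 20),
              "We may cancel at renewal; SSO is now enterprise-only."),
]

sso_churn_accounts = accounts_citing(corpus, "sso")
print(sorted(sso_churn_accounts))
```

The point of the sketch is that the question was never anticipated by a field: it is answered from the sentences themselves, and a different question next quarter only needs a different `topic` or a different set of cues.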

Why bolt-on engagement plateaus

Bolt-on engagement plateaus because the AI is constrained by the schema underneath it, and the schema was designed for an era of human reps typing into rectangles. Every "AI agent for sales" or "AI for service" product on the market today inherits the limits of the table it was wired into.

There are five specific ceilings bolt-on architectures hit:

- Field-shaped questions. When the storage layer is a CRM contact record, the AI is incentivized to ask field-shaped questions: name, company, employee count, industry. The customer's story gets compressed into the questions the schema can store, not the questions that would actually surface intent. This is the same trap that makes forms fail at intake.

- Lossy summarization. Bolt-on AI summarizes the conversation into a CRM note or a ticket category. The summary is what gets retained; the raw conversation is treated as throwaway. Every downstream report is built on the summary, which means the report is one lossy step away from reality.

- No re-querying. Because raw conversations aren't preserved as the primary record, you cannot ask new questions of old interactions. The day a new churn driver emerges, you cannot retroactively scan last quarter's calls for early signals.

- Channel fragmentation. Bolt-on engagement is bolted onto each channel separately: chatbot for web, IVR for phone, ticket form for support, survey for post-sale. The customer's voice is split across four summaries in four tools, none of which talk to each other in raw form.

- Rep-mediated learning loops. Bolt-on systems require a human rep to translate the customer into the system. The AI helps the rep type faster, but the AI doesn't learn from the customer directly. According to Gartner's 2024 customer service research, 58% of CS leaders have grown their teams — adding rep-mediated capacity rather than removing the rep as a bottleneck.

The plateau shows up in metrics that look fine quarter-over-quarter and then stall or slide. NPS goes from 32 to 38 and stalls. Ticket deflection goes from 22% to 31% and stalls. Survey response rates fall from 18% to 6% over three years. Each one is the schema fighting back.

What AI-native engagement looks like end-to-end

AI-native customer engagement looks like a continuous conversational layer that touches the customer at every moment — pre-sale intent capture, onboarding, expansion conversations, churn-risk interviews, post-incident debriefs, and renewal discovery — and writes everything back to a single conversational corpus. There is no separate "form for that," no separate "survey for this," and no separate "chatbot for the website." There is one engagement substrate, and structured fields are derived views.

End-to-end, an AI-native engagement system has six components that replace what the legacy stack does in pieces:

- Conversational intake replaces lead forms and intake PDFs. Instead of a 12-field form, prospects have a 90-second intelligent intake conversation that captures intent, urgency, and constraint context.

- Conversational onboarding replaces canned tooltip tours. New customers are interviewed about what they're hiring the product to do, and the onboarding flow adapts to their answers — covered in our AI-native onboarding playbook.

- Continuous discovery interviews replace quarterly surveys. PMs and CS teams run continuous discovery interviews on a weekly cadence with the Perspective interviewer agent.

- Churn signal capture replaces NPS-only health scoring. Conversations with at-risk accounts surface the specific churn driver in the customer's words, not as a 1-10 score — see the conversational churn analysis approach and at-risk identification by signal.

- Win-loss and renewal interviews replace gut-feel deal reviews. Every closed deal — won or lost — is interviewed about why, and renewals open with a structured conversation about value realized.

- Voice of customer corpus replaces siloed feedback databases. Every conversation lives in one searchable, re-queryable voice of customer corpus, and structured dashboards are derived views over the corpus.

The pattern across all six is the same: the conversation is durable, the structure is derived. That inversion is the entire architectural argument.
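One way to picture "the conversation is durable, the structure is derived" is a view function that rebuilds structured rows from raw transcripts on demand. The sketch below is an assumption-laden illustration, not a description of any vendor's implementation: `derive_view`, the extractor functions, and the regex rules are hypothetical stand-ins for whatever extraction a real system would use.

```python
import re


def churn_risk(transcript: str) -> bool:
    """Hypothetical extractor: flags transcripts that mention churn or cancelling."""
    return re.search(r"\b(churn\w*|cancel\w*)\b", transcript, re.IGNORECASE) is not None


def mentions_sso(transcript: str) -> bool:
    """Hypothetical extractor: flags transcripts that mention SSO as a whole word."""
    return re.search(r"\bsso\b", transcript, re.IGNORECASE) is not None


def derive_view(transcripts: dict, schema: dict) -> list:
    """Build structured rows from raw transcripts using the given schema.

    `schema` maps a field name to a function that reads the raw transcript.
    """
    rows = []
    for account_id, text in transcripts.items():
        row = {"account_id": account_id}
        for field, extractor in schema.items():
            row[field] = extractor(text)
        rows.append(row)
    return rows


# The durable record: raw text keyed by account (sample data for illustration).
transcripts = {
    "acme": "I'm thinking about churning because the new pricing tier moved "
            "the SSO feature out of reach for our team.",
    "globex": "Onboarding went well and the team is happy so far.",
}

# The original schema only knew about churn risk.
schema_v1 = {"churn_risk": churn_risk}
# Later a new question appears; the schema changes, the transcripts do not.
schema_v2 = {"churn_risk": churn_risk, "mentions_sso": mentions_sso}

rows_v1 = derive_view(transcripts, schema_v1)
rows_v2 = derive_view(transcripts, schema_v2)
```

Because the rows are computed from the transcripts rather than stored in their place, adding `mentions_sso` in `schema_v2` backfills every past conversation without asking any customer a second time.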

A useful comparison frame:

Migration path: from CRM-centric to conversation-centric

The migration from CRM-centric to conversation-centric engagement does not require ripping out your CRM in week one. It requires inverting which system is the source of truth, then progressively deprecating bolt-on capture surfaces as the conversational corpus replaces them. Five phases, roughly 12 months end-to-end.

Phase 1 — Pick one high-leverage capture surface and replace it (weeks 1–4). Choose a single moment in the customer journey where forms are obviously lossy. The two highest-leverage starting points are inbound lead intake and post-purchase onboarding, because both currently rely on rectangles and both immediately benefit from an interviewer agent. Stand up a Perspective concierge agent on that surface and route the resulting transcripts into your CRM as enriched contact records. The CRM stays in place; the input layer flips conversational.

Phase 2 — Make conversations the primary record for one customer cohort (weeks 5–12). Pick one customer cohort, usually new customers in the first 90 days or top-ARR accounts, and make their interaction history conversational by default. Run an interview at signup, a week-2 health check, a month-2 expansion discovery conversation, and a month-3 NPS-with-the-why. The conversational corpus becomes the durable artifact for that cohort; the CRM stores derived fields.

Phase 3 — Stand up the conversational corpus as a queryable layer (months 4–6). Once you have a few thousand transcripts across the journey, build the corpus as a first-class data layer that PMs, CS, and CX can query directly. Adopt the AI-first customer feedback analysis workflow to replace synthesis-by-spreadsheet. This is when you stop running ad hoc surveys and start re-querying existing conversations.

Phase 4 — Deprecate redundant capture surfaces (months 6–9). With the corpus in place, identify forms, surveys, and ticket fields that have become redundant — questions you're asking in three places that the corpus already answers. Kill the duplicates. Most teams find 30–50% of their structured-capture surface area is now redundant, which removes friction from the customer side and synthesis cost from the team side. The AI survey alternative and replacing surveys with AI playbooks cover the survey-side cuts in detail.
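The Phase 4 audit can be sketched as a small calculation: list which questions each capture surface asks, mark which ones the corpus already answers, and count the slots that could be cut. The surface names, question labels, and the helpers `redundant_questions` and `redundant_share` below are hypothetical, and the resulting share describes only this made-up sample.

```python
from collections import defaultdict


def redundant_questions(surfaces, corpus_answers):
    """Map each redundant question to the surfaces that ask it.

    A question counts as redundant if it is asked on more than one surface
    or if the conversational corpus already answers it.
    """
    asked_on = defaultdict(list)
    for surface, questions in surfaces.items():
        for question in questions:
            asked_on[question].append(surface)
    return {
        question: asking
        for question, asking in asked_on.items()
        if len(asking) > 1 or question in corpus_answers
    }


def redundant_share(surfaces, corpus_answers):
    """Fraction of (surface, question) slots that could be removed."""
    total = sum(len(questions) for questions in surfaces.values())
    if total == 0:
        return 0.0
    cuttable = 0
    for question, asking in redundant_questions(surfaces, corpus_answers).items():
        # If the corpus answers it, every copy can go; otherwise keep one copy.
        cuttable += len(asking) if question in corpus_answers else len(asking) - 1
    return cuttable / total


# Sample inventory for illustration only.
surfaces = {
    "lead_form": ["company_size", "use_case", "budget"],
    "onboarding_survey": ["use_case", "team_role"],
    "nps_survey": ["nps_score", "use_case"],
    "ticket_form": ["product_area", "company_size"],
}
corpus_answers = {"use_case"}

to_cut = redundant_questions(surfaces, corpus_answers)
share = redundant_share(surfaces, corpus_answers)
print(sorted(to_cut), round(share, 2))
```

The sketch assumes each surface lists a question at most once. In practice the harder part is deciding that two differently worded questions are the same question, which this example sidesteps by using shared labels.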

Phase 5 — Invert the org chart of who runs engagement (months 9–12). The final phase is organizational, not technical. CRM-centric engagement assumes a rep-and-tooling org: SDRs, AEs, CSMs, support agents, each typing into their slice of the schema. Conversation-centric engagement assumes a smaller core team running interviewer agents at much higher volume, with humans focused on the conversations that genuinely require human judgment. According to McKinsey's 2024 State of AI report, 65% of organizations now regularly use generative AI — but the high-performing minority are the ones restructuring workflows around it, not just adding it as a layer.

Objections handled

The opinion above tends to provoke four predictable objections. They are worth addressing directly because they are the reason most engagement teams stay bolt-on for one quarter too long.

"We already have a CRM and an AI agent — isn't that AI-native?" No. Having an AI agent that drafts emails or summarizes calls inside Salesforce, HubSpot, or Zendesk is the textbook bolt-on pattern. The CRM is still the schema; the AI is a productivity layer for reps typing into the schema. AI-native means the conversation is the schema. If your "AI agent" cannot run a 10-minute discovery interview unsupervised and write the conversation back as the primary record, it's a copilot, not an engagement system.

"Customers won't talk to AI — they want a human." This is empirically false for the kind of structured conversation most engagement requires. The Salesforce State of the Connected Customer report found 61% of customers prefer self-service for simple issues. What customers reject is bad bots: scripted, unable to handle uncertainty, unwilling to escalate. AI interviewers that can feel human when it matters get response rates 3-5x higher than equivalent surveys.

"We need structured data for our reports." You still get structured data — derived from the corpus on-demand, against any schema you want, with the option to re-derive when the schema changes. What you stop having is structured data that's a permanent ceiling on what you can ask.

"This sounds expensive." It is the opposite. Bolt-on stacks accumulate per-seat licenses across CRM, survey tool, chatbot, ticketing, NPS tool, churn-prediction tool, and survey-analysis tool — and each one needs its own integration, admin, and synthesis budget. A conversational corpus replaces three to five of those line items. Our customer research costs piece walks through the unit economics.

Frequently Asked Questions

What is AI-native customer engagement?

AI-native customer engagement is engagement architecture in which the customer conversation is the primary, durable record — and structured fields like CRM rows, ticket tags, and survey scores are derived views over that conversational corpus. It is distinct from bolt-on AI engagement, where AI is layered on top of a forms-and-fields CRM as a productivity assistant. AI-native means the system is conversational by default, end-to-end across the journey.

How is AI-native engagement different from a chatbot or AI agent?

AI-native engagement is different from a chatbot or AI agent in two ways: durability and scope. A chatbot answers a question and disappears; an AI-native engagement system runs structured conversations across the entire journey — intake, onboarding, expansion, churn, renewal — and stores every transcript as the primary record. Chatbots are tactical deflection; AI-native engagement is the substrate that replaces the CRM-as-schema model.

Do I have to replace my CRM to go AI-native?

No, you do not have to replace your CRM to go AI-native — but you do have to invert which system is the source of truth. The conversational corpus becomes the durable artifact, and the CRM stores derived fields synced from the corpus. Many teams keep their CRM for pipeline and forecasting and adopt a conversational layer for capture and discovery. The migration path above covers this in five phases.

What teams benefit most from AI-native engagement?

The teams that benefit most from AI-native engagement are product, customer success, and CX research teams that currently bottleneck on synthesis. They lose the most to bolt-on stacks and have the most to gain from a re-queryable corpus. The built for CX teams and built for product teams pages cover role-specific use cases, including continuous discovery, NPS-with-the-why, and churn-driver analysis.

How do I know if my current stack is AI-native or bolt-on?

To find out whether your current stack is AI-native or bolt-on, run this test: pick a question you have not asked before (for example, "how often did customers cite SSO as a friction point in the last 90 days") and try to answer it in under 10 minutes by querying transcripts. If you can, you're AI-native. If you have to add a new survey, wait six weeks for responses, and synthesize manually, you're bolt-on. The architecture test is whether the corpus answers questions the original schema didn't anticipate.

Conclusion

AI-native customer engagement is not a feature on top of your existing stack. It is a different stack — one where the conversation is the durable record and structure is a derived view. Bolt-on engagement, where AI is a productivity layer over a forms-and-fields CRM, has a permanent ceiling that no amount of additional model capability will raise. The teams that hit that ceiling and treat it as an architectural problem rather than a tooling problem are the ones operating in 2027 with continuous discovery, real churn-driver analysis, and a single queryable voice-of-customer corpus.

The migration is incremental, not all-or-nothing. Start with one capture surface, prove the corpus pays back, and progressively deprecate the redundant pieces of the legacy stack. Within 12 months, the engagement function looks fundamentally different — fewer survey tools, fewer dashboards built on lossy summaries, dramatically more customer truth captured per quarter.

If you want to see what conversation-centric engagement looks like in practice, start a research project with the Perspective interviewer agent, browse recent customer studies, or compare our pricing against the bolt-on stack you'd otherwise renew. The engagement stack needs to be rebuilt, not bolted on — and the rebuild is more practical than the renewal.
