Product Discovery Research: The Continuous Discovery Stack for AI-First Product Teams

TL;DR

Product discovery research is the practice of continuously talking to customers to decide what to build, why, and for whom — and in 2026 it runs on an AI-first stack, not a researcher's calendar. Teresa Torres' Continuous Discovery Habits framework defines the modern bar: weekly touchpoints with customers, an opportunity solution tree connecting interviews to bets, and assumption tests before commitments. The historical bottleneck was throughput — a single PM could run 4–8 interviews per week, and synthesis ate another full day. AI-first teams break that ceiling with conversational AI moderators, automated transcript analysis, and self-serve research outline tooling. Recommended stack: an AI interviewer for live discovery (Perspective AI), a lightweight opportunity solution tree tool (FigJam, Mural, or Miro), and a shared research repo. Weekly cadence: six to ten conversations, one synthesis review, one tree update, one assumption test. Teams running this rhythm cut discovery cycle time from quarters to days and ship roadmaps customers actually asked for.

What is product discovery research?

Product discovery research is the ongoing practice of interviewing customers and prospects to identify unmet needs, validate problems, and pressure-test solutions before engineering commits a single sprint. It differs from one-off user research projects by being continuous — embedded into the product team's weekly rhythm rather than commissioned ad hoc — and from delivery-focused validation by happening upstream, before the roadmap is locked. Teresa Torres formalized the modern version of this practice in her 2021 book Continuous Discovery Habits, which argues that product trios (PM, designer, engineer) should be talking to customers at least weekly and using those conversations to populate an opportunity solution tree.

In 2026, the practice itself hasn't changed. The throughput has. AI-first product teams now run discovery as a continuous, AI-moderated stream — moving from "we talked to eight customers last quarter" to "we talked to eighty customers last week." The rest of this guide is a practical stack and cadence template for building that habit.

What continuous discovery looks like in practice

Continuous discovery is a weekly rhythm, not a quarterly initiative. Each week, a product trio talks to at least one customer (Torres' minimum bar), updates an opportunity solution tree based on what they heard, picks one assumption to test, and runs that test before next week's review. The output isn't a research deck — it's a living artifact that connects every customer voice to a specific opportunity, a specific solution bet, and a specific experiment.

Three properties separate continuous discovery from traditional user research:

- Cadence is fixed; scope is fluid. Weekly touchpoints happen no matter what's on the roadmap. You don't pause discovery to "focus on shipping."

- The team owns it, not a researcher. PMs, designers, and engineers run interviews themselves. Centralized research teams support and review — they don't gatekeep.

- Decisions, not insights, are the unit of output. Every interview should move a node on the opportunity solution tree closer to a build/no-build call.

For a deeper walkthrough of the operational mechanics, see our companion piece on operationalizing Teresa Torres' framework with AI conversations.

The traditional discovery cadence (and why it broke)

The traditional product discovery cadence was scheduled, scarce, and slow. A typical pre-2024 sequence:

- Quarterly research planning. PMs filed research requests against a centralized UXR team's roadmap. Wait time: 4–8 weeks.

- Recruitment. A research ops person pulled a panel of 6–8 participants matching a screener. Wait: 1–2 weeks per study.

- Live moderated interviews. A researcher ran 30–60 minute Zoom sessions, one per participant. Throughput: 4–8 sessions per week, max.

- Manual synthesis. Transcripts were tagged by hand or with semi-manual coding tools. Wait: 1–2 weeks.

- Readout. A 30-slide deck delivered to the PM, who'd read the executive summary and move on.

End-to-end cycle time: 6–12 weeks per study. By the time the deck landed, the roadmap had moved on. Even the best research teams could only support 4–6 active studies at a time. At 6–12 weeks per study, that is roughly 17–52 studies a year, which caps a 200-PM org at well under one study per PM per year.
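A quick back-of-the-envelope check of that ceiling, as a Python sketch. It assumes studies run back to back with no idle time between them, which is our simplification rather than something the figures above state, and the function name is illustrative.

```python
WEEKS_PER_YEAR = 52

def studies_per_pm_per_year(active_studies: int, cycle_weeks: int, pm_count: int) -> float:
    """Studies each PM can expect per year from a shared research team.

    Assumes (our simplification) that every slot is refilled the moment a
    study finishes, so each slot completes WEEKS_PER_YEAR / cycle_weeks studies.
    """
    studies_per_year = active_studies * WEEKS_PER_YEAR / cycle_weeks
    return studies_per_year / pm_count

# Best case: 6 parallel studies on 6-week cycles.
# Worst case: 4 parallel studies on 12-week cycles.
best_case = studies_per_pm_per_year(active_studies=6, cycle_weeks=6, pm_count=200)
worst_case = studies_per_pm_per_year(active_studies=4, cycle_weeks=12, pm_count=200)

print(f"best case:  {best_case:.2f} studies per PM per year")
print(f"worst case: {worst_case:.2f} studies per PM per year")
```

Even the optimistic end of the range leaves most PMs without a study in a given year, which is the throughput problem in one number.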

This is why most product orgs talked a great game about being customer-centric while shipping features no one asked for. The math didn't work. As Nielsen Norman Group has documented for years, even five-user tests catch most usability issues — but five users still take weeks to recruit and synthesize under traditional ops. The constraint wasn't sample size. It was throughput. Industry data agrees: ProductPlan's 2024 State of Product Management report found product managers spend a small fraction of their week on customer conversations, with most time consumed by stakeholder meetings and delivery coordination.

The AI-first discovery stack

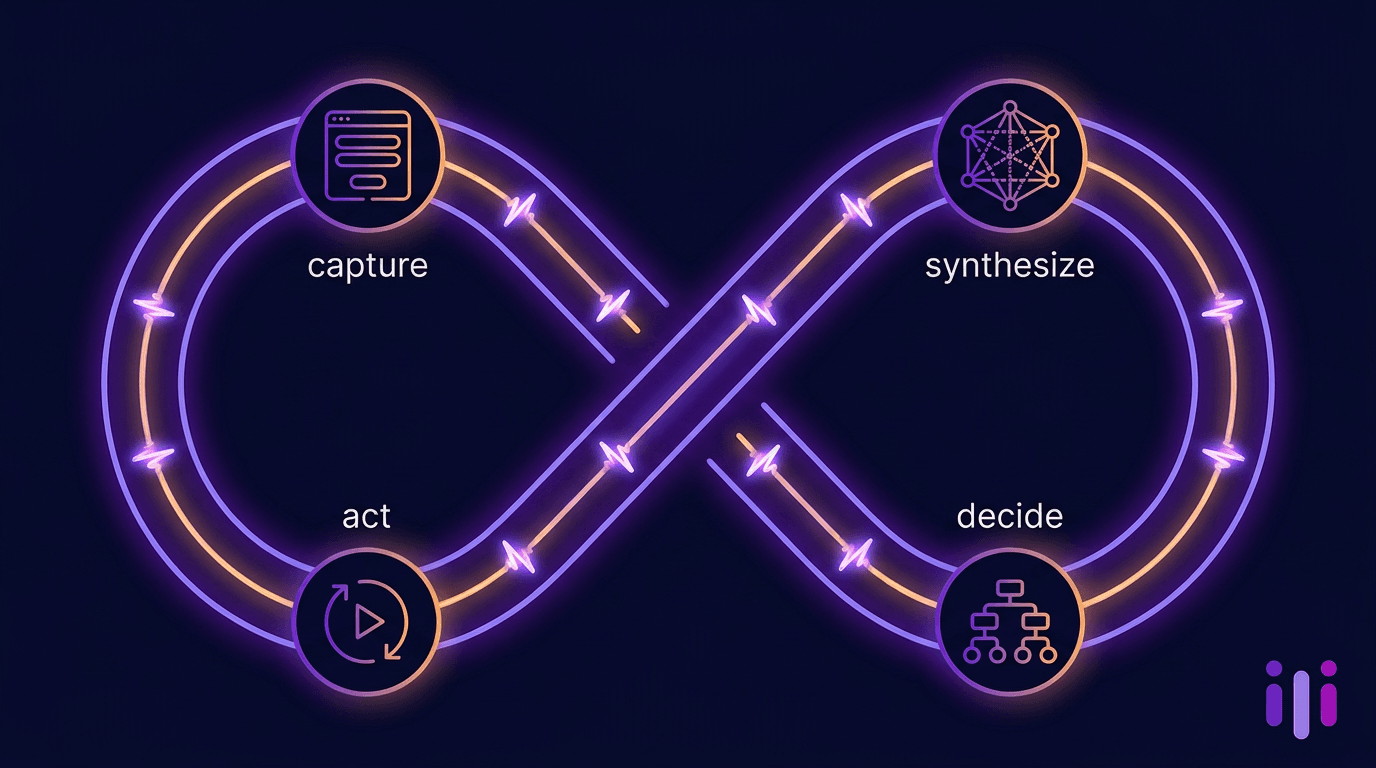

The AI-first discovery stack has three layers — capture, synthesize, decide — and each has a clear job. The point isn't to replace the human trio. It's to remove every operational drag between a customer voice and a roadmap decision.

Layer 1: Capture (AI-moderated interviews)

The capture layer is an AI interviewer that runs structured-but-conversational sessions on demand. Instead of a researcher manually running 6 Zoom calls per week, the team launches a discovery study and routes 60+ participants through it asynchronously. Perspective AI's AI interviewer agent handles this layer for product teams — it follows up on vague answers, probes for context, and captures the "why now" that surveys flatten. Voice and text modes are both supported.

The non-negotiable property of this layer is probing depth. A capture tool that doesn't follow up, that just records form-style answers, isn't a discovery tool. It's a survey with extra steps. We've written in detail about why conversational data collection beats forms for discovery; the same principles apply to AI moderators.

Layer 2: Synthesize (automated transcript analysis)

The synthesize layer turns raw transcripts into themes, quotes, and decision-ready summaries — automatically. This is where AI-first stacks compress weeks into hours. Modern transcript analysis clusters mentions of jobs, pains, and gains; surfaces direct quotes; and generates structured summaries mapped to your discovery questions.

For Perspective AI users, this is the Magic Summary report layer — an automatic synthesis pass that runs the moment a session completes. Output: a per-conversation summary, a cross-conversation theme map, and quote extraction tagged to the opportunity solution tree node it informs.
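To make the shape of that output concrete, here is a deliberately naive sketch of theme tagging with quote extraction. It uses plain keyword matching as a stand-in for the model-based clustering a real synthesis tool would use; it is not how Magic Summary or any other product works, and the keyword lists, function name, and sample transcripts are all invented for illustration.

```python
from collections import defaultdict

# Hypothetical keyword lists; a real tool would not rely on fixed keywords.
THEME_KEYWORDS = {
    "job": ("trying to", "need to", "want to"),
    "pain": ("frustrating", "slow", "confusing"),
    "gain": ("saves", "faster", "easier"),
}

def tag_quotes(transcripts: dict[str, list[str]]) -> dict[str, list[tuple[str, str]]]:
    """Group verbatim sentences under each theme whose keywords they mention.

    Returns {theme: [(participant, sentence), ...]} so every theme keeps
    its supporting quotes and who said them.
    """
    themes = defaultdict(list)
    for participant, sentences in transcripts.items():
        for sentence in sentences:
            lowered = sentence.lower()
            for theme, keywords in THEME_KEYWORDS.items():
                if any(keyword in lowered for keyword in keywords):
                    themes[theme].append((participant, sentence))
    return dict(themes)

# Invented sample data, two participants.
transcripts = {
    "P1": [
        "I was trying to export a report and it was so slow.",
        "The new import is easier than the old one.",
    ],
    "P2": ["Setup was confusing."],
}

themes = tag_quotes(transcripts)
for theme, quotes in themes.items():
    print(theme, len(quotes))
```

The useful property to copy is the output shape: each theme carries its quotes and their speakers, so the trio can trace any pattern back to the customers behind it.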

Layer 3: Decide (opportunity solution tree + assumption tests)

The decide layer is still mostly human — and that's correct. AI is good at capturing and synthesizing; humans are good at deciding what to bet on. The opportunity solution tree from Torres' framework is the artifact that anchors this layer: customer outcome at the top, opportunities (problems/needs) below it, solutions branching from each opportunity, and assumption tests beneath each solution.

Tools for the tree itself are deliberately lightweight — FigJam, Mural, Miro, or even a shared Notion doc. The point is that the tree is updated weekly from the synthesize layer's output, and every solution branch has an assumption test attached before any engineering happens. For high-stakes bets, AI product roadmap validation lets you pressure-test assumptions in hours instead of running a full study.
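If it helps to see the tree as a data structure, the sketch below models the four levels described above and flags solution branches that have no assumption test attached. The class names, field names, and example content are ours, chosen for illustration; they are not taken from Torres' book or from any tool.

```python
from dataclasses import dataclass, field

@dataclass
class AssumptionTest:
    assumption: str
    method: str  # e.g. "interview", "prototype review", "fake-door experiment"

@dataclass
class Solution:
    name: str
    tests: list[AssumptionTest] = field(default_factory=list)

@dataclass
class Opportunity:
    need: str
    solutions: list[Solution] = field(default_factory=list)

@dataclass
class OpportunitySolutionTree:
    outcome: str
    opportunities: list[Opportunity] = field(default_factory=list)

    def untested_solutions(self) -> list[str]:
        """Names of solution branches with no assumption test attached."""
        return [
            solution.name
            for opportunity in self.opportunities
            for solution in opportunity.solutions
            if not solution.tests
        ]

# Hypothetical example tree.
tree = OpportunitySolutionTree(
    outcome="Increase second-month retention from 62% to 70%",
    opportunities=[
        Opportunity(
            need="Users cannot find the integration they need",
            solutions=[
                Solution(
                    "Searchable integrations directory",
                    tests=[AssumptionTest("Users search before they browse", "prototype review")],
                ),
                Solution("Guided integration setup"),
            ],
        )
    ],
)

print(tree.untested_solutions())
```

Anything this check returns is a branch that should not reach engineering yet. A whiteboard tool will not enforce that rule for you, so the trio has to.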

How the layers connect

A team running this stack moves from "research request → 8-week wait → deck" to "discovery question → 48-hour insight → opportunity-tree update."

Weekly cadence template

Below is the cadence template AI-first product teams use. It assumes a product trio (PM, designer, engineer) and a target of 6–10 customer conversations per week. Adapt as needed, but don't dilute the weekly minimum — the whole point is that customer signal arrives every week, not in bursts.

Monday — Set the discovery question

The trio meets for 30 minutes to pick the week's discovery question. The question must tie to a node on the opportunity solution tree. Examples:

- "Why did mid-market accounts that downgraded last quarter actually leave?"

- "When users hit our integrations page and bounce, what were they trying to do?"

- "What does the post-onboarding 'second-week' job look like for power users?"

The question becomes the brief for the AI interviewer. Use a research outline builder to convert it into 6–10 structured-but-open questions that the AI moderator will adapt to each conversation.

Tuesday — Launch the study

The PM launches the study and routes participants. Sources include in-product invitations (best for current users), email lists (best for at-risk or churned cohorts), and recruited panels (best for prospects). For onboarding-stage signals, embed the AI interviewer directly into the in-app onboarding flow — see AI-native onboarding for the architectural pattern.

Target: 60+ invites, which should comfortably yield the 6–10 completed interviews you need by Thursday. Async AI interviews complete at roughly a 15–25% rate without incentives (about 9–15 completions from 60 invites) and at a much higher rate with them.
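The invite math is easy to sanity-check. This sketch assumes every invite is delivered and that the completion rate applies uniformly across sources, both of which are simplifications; the function name is illustrative.

```python
import math

def invites_needed(target_completions: int, completion_rate: float) -> int:
    """Smallest number of invites expected to yield the target completions."""
    if not 0 < completion_rate <= 1:
        raise ValueError("completion_rate must be in (0, 1]")
    return math.ceil(target_completions / completion_rate)

# Completion rates quoted above for studies without incentives.
LOW_RATE, HIGH_RATE = 0.15, 0.25

# Top of the weekly target (10 interviews) at the pessimistic rate,
# bottom of the target (6 interviews) at the optimistic rate.
most_invites = invites_needed(10, LOW_RATE)
fewest_invites = invites_needed(6, HIGH_RATE)

print(f"invites needed: {fewest_invites} to {most_invites}")
```

Under these assumptions, somewhere between 24 and 67 invites covers the 6–10 target, so 60+ sits near the cautious end of the range.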

Wednesday — Capture runs; team works on builds

While capture runs in the background, the trio executes on existing roadmap commitments. No discovery meetings on Wednesday — protect maker time.

Thursday — Read the synthesis output

By Thursday morning, automated synthesis has produced a theme map and quote pull from the week's conversations. The trio spends 60 minutes reading the output together. The job is pattern recognition, not deep analysis — what's repeating? what surprised us? what new opportunity-tree node does this suggest?

Friday — Update the tree, pick the next assumption test

The trio meets for 60 minutes to update the opportunity solution tree based on Thursday's read. New opportunities get added; old ones get re-prioritized. The trio picks one assumption beneath the current top opportunity to test next week — by interview, prototype review, fake-door experiment, or whatever the assumption demands.

The output of every Friday is one updated tree and one assumption-test brief. That's the unit of progress.

What weekly looks like over a quarter

Twelve weeks of this cadence produces 72–120 customer conversations, 12 updates to the opportunity solution tree, 12 assumption tests, and 1–3 validated bets ready for engineering commitment. Compare that to the traditional cadence over the same quarter: 1–2 studies, a deck for each, and no validated bets.

Tools and roles

The tooling decisions matter less than the cadence, but a few choices are load-bearing. Below is the stack we recommend for AI-first product teams in 2026.

Capture — Perspective AI for async discovery, in-product onboarding interviews, and at-risk customer interviews. Voice and text modes; native research outline builder for non-researcher PMs. For a broader market view, see our user interview software comparison and AI UX research tools guide.

Synthesize — Perspective AI Magic Summary for automatic per-session and cross-session synthesis. The AI-first qualitative research playbook covers when synthesis automation works and when it doesn't.

Decide — FigJam, Mural, or Miro for the opportunity solution tree (pick whichever your team already uses). Linear, Notion, or Confluence for the assumption-test log. Each test should have an explicit prediction, a method, and a kill-criterion.
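One way to keep assumption-test entries honest is to give every entry the same three fields plus a numeric threshold. The sketch below is a hypothetical record format, not a feature of Linear, Notion, or Confluence; the field names and the example numbers are invented.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AssumptionTestBrief:
    assumption: str
    method: str        # how the assumption will be tested
    prediction: str    # what the team expects to see, stated up front
    metric: str        # what gets measured
    kill_below: float  # kill criterion: drop the bet if the metric lands under this

    def verdict(self, observed: float) -> str:
        """Apply the kill criterion to the observed metric value."""
        return "kill" if observed < self.kill_below else "keep"

# Hypothetical brief for a fake-door experiment.
brief = AssumptionTestBrief(
    assumption="Mid-market admins want a self-serve downgrade path",
    method="fake-door experiment",
    prediction="At least 5% of admins who see the entry point click it",
    metric="click-through rate",
    kill_below=0.05,
)

print(brief.verdict(0.03))
print(brief.verdict(0.08))
```

Writing the threshold down before the test runs is the point. It stops the team from reinterpreting a weak result as a win after the fact.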

Roles. The roles that matter aren't job titles — they're responsibilities. The product trio (PM, designer, engineer) jointly owns the tree and the cadence. UX research, when it exists as a discipline, owns method quality, study templates, and synthesis review for high-stakes bets. Engineering leadership protects the trio's discovery time against delivery pressure — this is the role that fails most often, and it's why most discovery programs collapse.

For research-leadership readers, UX research at scale and customer research at scale cover how the centralized research function shifts when product trios run their own discovery.

Common failure modes

The cadence is simple. Execution fails in predictable, recurring ways. The most common failure modes:

1. Discovery gets paused for "shipping focus." A delivery deadline approaches; the trio cancels Monday's discovery question; weeks become months; the cadence dies. Fix: make the weekly cadence a non-negotiable team ritual, like standup. Engineering leadership owns this.

2. AI interviews replace, instead of augment, the trio's direct customer contact. If only the AI moderator ever talks to customers, the trio loses the texture and surprise that direct conversations create. Fix: every team member personally reads at least three full transcripts per week, not just summaries.

3. Insights without decisions. A research deck is delivered. Heads nod. Nothing changes. Fix: tie every Thursday read to a Friday tree update. The unit of weekly output is one updated tree and one new assumption-test brief.

4. Outcome-free opportunities. Teams populate the tree with opportunities that aren't connected to a measurable outcome at the top. Fix: pick one outcome per quarter (e.g., "increase second-month retention from 62% to 70%") and force every opportunity on the tree to plausibly connect to it.

5. Skipping assumption tests. The team decides to build something based on three customer conversations. No fake-door test, no prototype review, no validation. Fix: every solution branch has an assumption test attached before engineering commits. For sprint-sized bets, a feature prioritization framework explicitly forces an assumption test as a gate.

6. One PM running the whole thing. Continuous discovery is a trio practice. If only the PM does it, the designer and engineer never internalize the customer voice. Fix: all three trio members read transcripts and attend the Friday tree update; designers and engineers can run interviews directly with the AI moderator's outline as a backbone.

Frequently Asked Questions

How is product discovery research different from user research?

Product discovery research is upstream and continuous; user research is often downstream and project-based. Discovery happens before a roadmap commitment — it's how the team decides what's worth building. User research, in its narrower sense, often happens during or after a build to validate or evaluate a specific design. Modern product orgs blur the line, with discovery research happening continuously and user research handling specific evaluative studies.

How many customer interviews per week is enough for continuous discovery?

Teresa Torres' floor is one customer conversation per week per product trio. The realistic AI-first target is six to ten interviews per week, because the marginal cost of additional interviews drops to near-zero with an AI moderator. Six to ten gives you enough cross-conversation pattern density to update the opportunity solution tree meaningfully each week. Below three conversations a week, the signal is too noisy to act on with confidence; above twelve, synthesis quality degrades unless you tighten the discovery question's scope.

Can AI moderators really replace human interviewers for product discovery?

AI moderators replace human interviewers for the capture layer of routine discovery — async interviews, in-product moments, and at-scale recruitment. They do not replace human judgment for high-stakes bets, sensitive populations, or exploratory work where the trio needs to be in the room learning to ask better questions. The right model: AI runs the volume, humans run the conversations that matter most and read the transcripts of the rest.

What's the difference between continuous discovery and Jobs-to-be-Done?

Continuous discovery is a cadence and decision framework; Jobs-to-be-Done is a theory of customer behavior. They're complementary. JTBD gives you the lens for interpreting what customers tell you (jobs, forces, switching moments). Continuous discovery gives you the rhythm for collecting that signal weekly and turning it into roadmap bets. Many AI-first teams use JTBD-style prompts inside a continuous discovery cadence — see our Jobs-to-be-Done interviews guide for product teams for the prompting patterns.

How long does it take to get continuous discovery working?

Most teams need 6–8 weeks to make the cadence stick. Weeks 1–2 are tooling setup and the first end-to-end run. Weeks 3–4 expose the failure modes — synthesis backlogs, missed Friday meetings, opportunity-tree neglect. Weeks 5–6 are when the trio internalizes the rhythm and stops needing to be reminded. By week 8, the team produces one validated bet per month and the rhythm runs itself. The variable is leadership air-cover, not tooling.

Do product discovery research findings replace data analytics?

No — they complement it. Analytics tells you what is happening (drop-off rates, feature adoption, retention curves). Product discovery research tells you why — the context, the alternative the customer almost picked, the constraint they didn't tell you about. The strongest AI-first product teams pipe analytics signals (e.g., a spike in onboarding drop-off at step 4) into a discovery question (e.g., "interview 30 users who dropped at step 4"), then close the loop by re-watching the metric after shipping.
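As a rough illustration of that hand-off, the sketch below finds the funnel step with the steepest drop-off and turns it into a discovery question. The funnel counts are invented, the function names are ours, and "dropped at step N" is taken to mean users who completed the previous step but not step N.

```python
def worst_drop_off(funnel: list[tuple[str, int]]) -> tuple[str, float]:
    """Find the step that loses the largest share of users.

    `funnel` is an ordered list of (step name, users who completed it).
    A step's drop-off rate is the share of users who completed the
    previous step but not this one.
    """
    worst_step, worst_rate = "", 0.0
    for (_, prev_users), (step, users) in zip(funnel, funnel[1:]):
        if prev_users == 0:
            continue  # nobody reached this step, so there is no rate to compute
        rate = 1 - users / prev_users
        if rate > worst_rate:
            worst_step, worst_rate = step, rate
    return worst_step, worst_rate

def discovery_question(step: str, sample_size: int = 30) -> str:
    """Phrase the analytics signal as a brief for the week's discovery study."""
    return (
        f"Interview {sample_size} users who dropped at {step}: "
        "what were they trying to do, and what stopped them?"
    )

# Hypothetical onboarding funnel.
funnel = [
    ("step 1", 1000),
    ("step 2", 900),
    ("step 3", 850),
    ("step 4", 510),
    ("step 5", 480),
]

step, rate = worst_drop_off(funnel)
question = discovery_question(step)
print(f"{step}: {rate:.0%} drop-off")
print(question)
```

The same metric is what the team re-checks after shipping, which closes the loop between the analytics signal and the interviews it triggered.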

Conclusion

Product discovery research, done as Teresa Torres defines it, has always been the right practice. The AI-first stack is what finally makes the cadence affordable. By collapsing the capture layer with AI-moderated interviews and the synthesize layer with automatic transcript analysis, AI-first product teams can sustain a true weekly continuous discovery rhythm — six to ten conversations, one synthesis review, one tree update, one assumption test — without burning out a UXR team or stalling delivery.

The pattern that wins in 2026: weekly cadence, lightweight tools for the decide layer, AI for the capture and synthesize layers, and a product trio that holds itself accountable to the rhythm. Teams that adopt it ship roadmaps customers asked for; teams that don't keep shipping features that miss.

If you're ready to operationalize continuous discovery research with AI-first tooling, start a discovery study with Perspective AI — set the question, route participants, and have a synthesis-ready theme map within 48 hours of launch.
