
How to Reduce Customer Churn in SaaS: A 2026 Operational Playbook

TL;DR

To reduce customer churn in SaaS in 2026, stop tuning health-score dashboards and start running structured conversations at the four moments that actually move net dollar retention (NDR): onboarding stalls, health-score downgrades, the renewal window, and expansion gates. The math is unforgiving: at 3% monthly gross churn, you lose roughly 31% of cohort ARR each year (1 - 0.97^12 ≈ 0.306) before any expansion, and Bessemer's 2024 State of the Cloud benchmarks place top-quartile public SaaS NDR at 120%+ versus a median that has dropped below 110% post-2023. Telemetry tells you who is churning; it almost never tells you why in time to save the account. The operational playbook below routes telemetry signals into AI-moderated customer conversations, then back into CS plays: onboarding intervention, health-triggered interviews, renewal-motion redesign, and expansion gating. Treat NDR like a product surface, not a CS KPI: every lever in this guide is owned by a human, instrumented in product, and triggered by a conversation. SaaS retention compounds. So does the cost of running the same playbook everyone else is running.

Why SaaS Churn Math Is Different

SaaS churn math punishes inaction faster than any other business model because the revenue you're protecting is recurring, contracted, and benchmarked publicly. A 1-point drop in net dollar retention compounds against next year's ARR forecast and against your valuation multiple at the same time.
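The compounding behind the 3% monthly figure can be checked in a few lines. This is a minimal sketch that assumes a constant monthly gross churn rate applied to a single starting cohort with no expansion; the function name is illustrative, not taken from any tool.

```python
def annual_churn_from_monthly(monthly_churn: float) -> float:
    """Fraction of a cohort's starting ARR lost after 12 months.

    Assumes the same gross churn rate every month and no expansion.
    """
    if not 0.0 <= monthly_churn <= 1.0:
        raise ValueError("monthly_churn must be between 0 and 1")
    retained_after_year = (1.0 - monthly_churn) ** 12
    return 1.0 - retained_after_year


if __name__ == "__main__":
    for rate in (0.01, 0.02, 0.03):
        lost = annual_churn_from_monthly(rate)
        print(f"{rate:.0%} monthly churn -> {lost:.1%} of cohort ARR lost per year")
```

At 3% monthly, the retained share is 0.97 raised to the 12th power, or about 69.4%, which is where the roughly 30% annual loss comes from.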

The three numbers every SaaS retention program runs against:

- Gross Revenue Retention (GRR): starting ARR minus churn and downgrades, divided by starting ARR. Best-in-class B2B SaaS sits at 90%+; below 80% is a structural problem, not a CS staffing problem.

- Net Dollar Retention (NDR / NRR): starting ARR minus churn and downgrades, plus expansion from the same cohort, divided by starting ARR. Public SaaS leaders (Snowflake, Datadog, Cloudflare in their peak years) cleared 130%+. The post-2023 reset has compressed median NDR for B2B SaaS into the 105–110% band, per the Bessemer State of the Cloud 2024 report.

- Logo churn vs. dollar churn: they tell different stories. SMB-heavy ARR books often show 4%+ monthly logo churn but flat net dollar churn, because expansion in mid-market accounts offsets the revenue lost from the logos that left. Report both.
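The three metrics above reduce to simple ratios over one cohort and one period. The sketch below is illustrative only: the `CohortPeriod` fields and the sample figures are assumptions made up for the example, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class CohortPeriod:
    starting_arr: float    # ARR of the cohort at the start of the period
    churned_arr: float     # ARR lost to cancelled accounts
    downgraded_arr: float  # ARR lost to contractions on surviving accounts
    expansion_arr: float   # ARR gained from upsell/cross-sell in the same cohort
    starting_logos: int    # number of accounts at the start of the period
    churned_logos: int     # number of accounts that cancelled


def grr(p: CohortPeriod) -> float:
    """Gross revenue retention: ignores expansion, so it cannot exceed 1.0."""
    return (p.starting_arr - p.churned_arr - p.downgraded_arr) / p.starting_arr


def ndr(p: CohortPeriod) -> float:
    """Net dollar retention: GRR numerator plus expansion."""
    kept = p.starting_arr - p.churned_arr - p.downgraded_arr
    return (kept + p.expansion_arr) / p.starting_arr


def logo_churn(p: CohortPeriod) -> float:
    """Share of accounts lost, regardless of their size."""
    return p.churned_logos / p.starting_logos


if __name__ == "__main__":
    # Hypothetical cohort: many small logos lost, offset by expansion elsewhere.
    period = CohortPeriod(1_000_000, 80_000, 20_000, 150_000, 200, 30)
    print(f"GRR {grr(period):.0%}, NDR {ndr(period):.0%}, logo churn {logo_churn(period):.0%}")
```

In this made-up cohort, 15% of logos leave while NDR still clears 100%, which is the masking effect that makes reporting both numbers necessary.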

If you're running a SaaS retention program in 2026 without weekly visibility into NDR cohorts by segment, by acquisition channel, and by ICP fit, you're optimizing in the dark. That's where most "we need to reduce churn" conversations actually start — with a dashboard, not a diagnosis.

Why Standard SaaS Churn Playbooks Fail

Most standard SaaS churn playbooks fail because they're telemetry-first and conversation-last. The prevailing pattern goes: build a health score from product usage and ticket volume → set thresholds → page a CSM when an account drops red → CSM books a call → account churns anyway. The breakdown isn't in the score. It's that the score detects the symptom (declining usage) without ever surfacing the cause (a stalled internal champion, a competing product they're piloting, an unmet integration need that quietly killed adoption two quarters ago).

The standard playbook makes three assumptions that don't hold up in 2026:

- That a CSM has time to talk to every red-flagged account. With ratios of 1 CSM to 50–150 accounts in scaled CS orgs, that's not a staffing problem you can hire your way out of. See scaled customer success in 2026 for the headcount math.

- That accounts will tell you why they're churning if you ask. They won't, especially in a 30-minute Zoom with their account manager. Voluntary churn surveys average 10–15% response rates and skew to the loudest 5% of customers.

- That telemetry can substitute for context. It can't. Usage drops because of vacations, hiring freezes, reorgs, parental leaves of the champion, and a hundred other things that aren't churn signals. "Why customer dashboards don't show the real reasons accounts churn" walks through the false-positive problem in detail.

The fix isn't more dashboards. It's a diagnostic loop that converts telemetry signals into conversations, conversations into context, and context into routed action.

The Diagnostic Loop: From Data to Conversation to Action

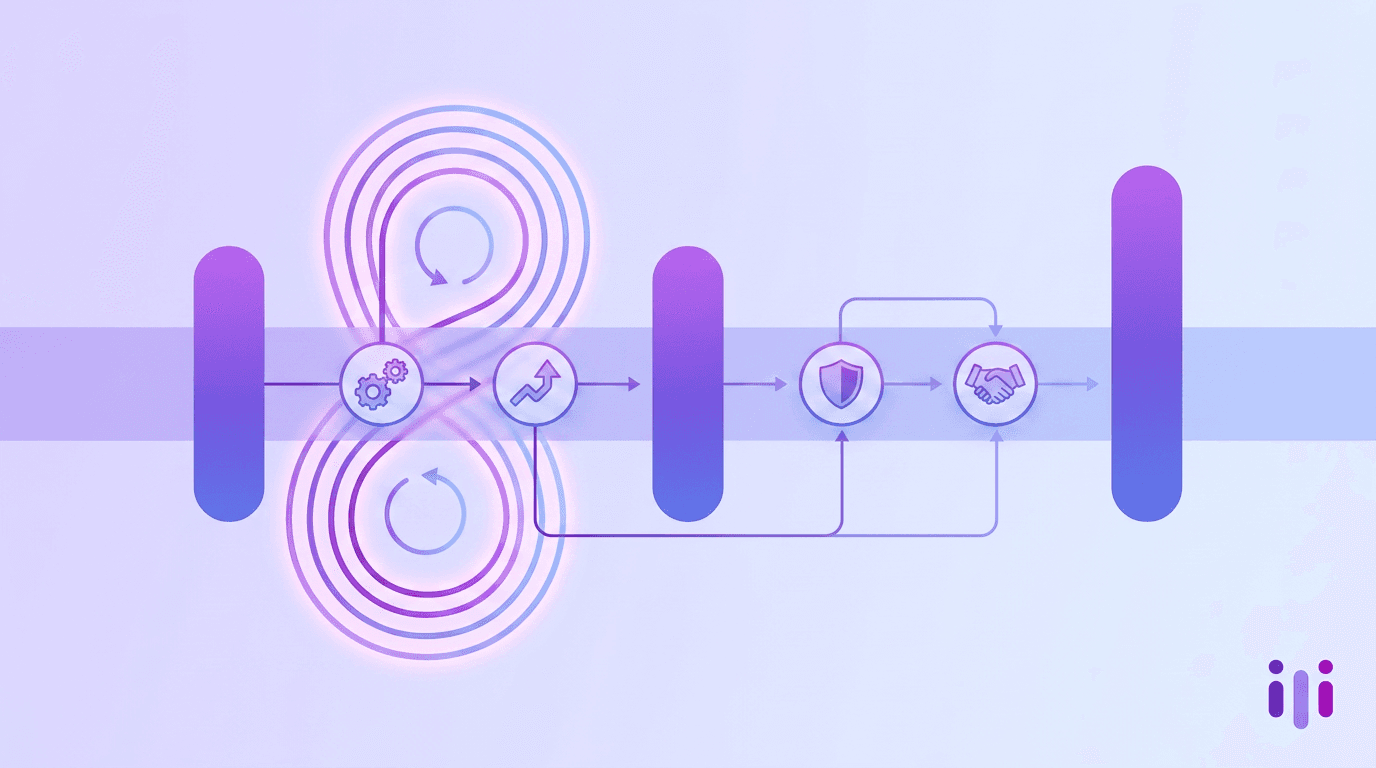

The diagnostic loop is the operating model that connects product telemetry to CS plays through structured conversations. It runs continuously across four lifecycle moments rather than firing once a quarter as a "voice of customer" survey project.

The loop has four steps:

- Signal — A telemetry event fires: a feature adoption drop, an NPS detractor score, a support ticket trend, a renewal-window flag, an executive sponsor change in the CRM.

- Conversation — The signal triggers a structured AI-moderated interview routed to the right contact at the account. Five to twelve minutes, asynchronous, with follow-up probes on vague answers. This replaces the "CSM tries to book a call" step that already breaks at scale.

- Synthesis — Conversation responses are auto-analyzed and tagged against churn-driver categories (product fit, integration gaps, ROI uncertainty, sponsor loss, competitive pressure, pricing).

- Routing — The synthesized cause routes to the right play: a CSM saves an at-risk account, product files a roadmap signal, RevOps adjusts the renewal motion, sales blocks an expansion that would have padded next quarter and accelerated churn the quarter after.
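The routing step of the loop can be sketched as a lookup from synthesized churn driver to owning team. The driver categories come from the synthesis step above; the specific driver-to-owner mapping, the class names, and the `route` function are assumptions for illustration, and the conversation and synthesis steps are left out, with `driver` standing in for their output.

```python
from dataclasses import dataclass
from enum import Enum


class Driver(Enum):
    PRODUCT_FIT = "product fit"
    INTEGRATION_GAP = "integration gaps"
    ROI_UNCERTAINTY = "ROI uncertainty"
    SPONSOR_LOSS = "sponsor loss"
    COMPETITIVE_PRESSURE = "competitive pressure"
    PRICING = "pricing"


# Hypothetical ownership map; a real one depends on how your teams are organized.
ROUTES = {
    Driver.PRODUCT_FIT: "product",
    Driver.INTEGRATION_GAP: "product",
    Driver.ROI_UNCERTAINTY: "csm",
    Driver.SPONSOR_LOSS: "csm",
    Driver.COMPETITIVE_PRESSURE: "revops",
    Driver.PRICING: "revops",
}


@dataclass
class Signal:
    account_id: str
    kind: str  # e.g. "feature_adoption_drop", "nps_detractor", "sponsor_change"


@dataclass
class RoutedAction:
    account_id: str
    signal_kind: str
    driver: Driver
    owner: str


def route(signal: Signal, driver: Driver) -> RoutedAction:
    """Attach the synthesized cause to the signal and pick the owning team."""
    return RoutedAction(signal.account_id, signal.kind, driver, ROUTES[driver])


if __name__ == "__main__":
    action = route(Signal("acct-42", "feature_adoption_drop"), Driver.INTEGRATION_GAP)
    print(action)
```

Keeping the map as plain data rather than branching logic makes it easy to review with the teams it assigns work to.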

That last point is where most SaaS churn programs leak the most value. Saving accounts after they're already at risk is the lowest-leverage step in the loop. The higher-leverage moves are upstream: better onboarding, better expansion gating, and a renewal motion that doesn't surprise anyone.

Perspective AI's AI interviewer agent is designed for exactly this signal-to-conversation handoff — taking a CRM or product event as a trigger, running the interview, and returning structured insight that a CSM or PM can act on. See AI-enabled customer engagement for the broader architecture.

Lever 1: Onboarding Intervention

Onboarding intervention is the highest-leverage churn-reduction lever in SaaS because the first 14–60 days set the activation outcome that predicts 12-month retention. McKinsey's 2023 SaaS benchmarks repeatedly show that customers who don't reach activation by day 30 churn at 3–5x the rate of activated customers regardless of contract length.

The standard onboarding intervention pattern in 2026:

- Day 0–7 — Intent capture, not just account setup. Replace your onboarding form with a conversational intake that captures the specific outcome the customer bought you for, the named blocker they expect to hit, and the internal stakeholder list. See conversational intake AI for the pattern.

- Day 7–14 — Activation gate, not just feature checklist. Define one binary activation event per ICP segment ("imported their first dataset," "ran their first report," "connected their CRM") and instrument an AI-moderated check-in if it doesn't fire by day 14.

- Day 30 — Outcome interview, not just NPS. A scored NPS prompt at day 30 is a cosmetic measurement. A 7-minute conversational interview that asks what the customer expected to be doing by now, what they're actually doing, and what's blocking the gap is a leading indicator of renewal probability.

- Day 60 — Stakeholder breadth check. Single-threaded accounts churn at 2x the rate of multi-threaded accounts in mid-market SaaS. Use the day-60 conversation to surface other stakeholders, then route them to product-specific intros.
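One way to instrument the timeline above is a small function that reports which check-ins are due for an account. This is a sketch under assumptions: the touchpoint names, the `completed` set, and the single boolean `activated` flag are hypothetical, and a real system would read them from product analytics and the CRM.

```python
from datetime import date

ACTIVATION_DEADLINE_DAYS = 14
OUTCOME_INTERVIEW_DAY = 30
STAKEHOLDER_CHECK_DAY = 60


def due_touchpoints(start: date, today: date, activated: bool,
                    completed: set[str]) -> list[str]:
    """Return the onboarding conversations that should have fired by today."""
    age_days = (today - start).days
    due = []
    # The activation check-in only fires if the activation event never happened.
    if (age_days >= ACTIVATION_DEADLINE_DAYS and not activated
            and "activation_checkin" not in completed):
        due.append("activation_checkin")
    if age_days >= OUTCOME_INTERVIEW_DAY and "outcome_interview" not in completed:
        due.append("outcome_interview")
    if age_days >= STAKEHOLDER_CHECK_DAY and "stakeholder_breadth_check" not in completed:
        due.append("stakeholder_breadth_check")
    return due


if __name__ == "__main__":
    print(due_touchpoints(date(2026, 1, 1), date(2026, 1, 16), False, set()))
```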

Most AI-native onboarding tools aren't actually native — they're tour overlays bolted onto static product documentation. The 2026 default is conversational onboarding that adapts to the customer's stated outcome. The architecture test for AI-native customer engagement lays out the disqualifiers.

Lever 2: Health-Score-Triggered Conversations

Health-score-triggered conversations replace the broken "page a CSM when score drops" model with an automated interview that captures the why behind a usage decline before a human ever gets involved. The CSM still runs the save play — but they show up to the call with context, not a discovery list.

Here's the trigger architecture that works:

The critical design choice: the conversation runs first, then routes to a human play with the context already captured. This is the inverse of the legacy pattern where a CSM tries to book a call cold and either gets ghosted or runs an undirected discovery session.
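As a rough illustration of the conversation-first pattern, the sketch below fires an interview trigger only when an account's health score moves into a worse band, so the interview request exists before any human is paged. The band thresholds (70 and 40) and all names are assumptions for the example, not recommended values.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical bands, checked from best to worst: (name, minimum score).
BANDS = (("green", 70.0), ("yellow", 40.0), ("red", 0.0))
SEVERITY = {"green": 0, "yellow": 1, "red": 2}


def band(score: float) -> str:
    """Map a 0-100 health score to a colour band."""
    for name, floor in BANDS:
        if score >= floor:
            return name
    return "red"  # scores below zero are treated as red


@dataclass
class InterviewTrigger:
    account_id: str
    from_band: str
    to_band: str


def downgrade_trigger(account_id: str, previous_score: float,
                      current_score: float) -> Optional[InterviewTrigger]:
    """Return a trigger only when the band gets worse, not on every score dip."""
    before, after = band(previous_score), band(current_score)
    if SEVERITY[after] > SEVERITY[before]:
        return InterviewTrigger(account_id, before, after)
    return None


if __name__ == "__main__":
    print(downgrade_trigger("acct-7", 75.0, 55.0))
```

Triggering on band transitions rather than raw score changes is one way to limit false positives from short-lived usage dips.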

For the underlying score logic, customer health score automation in 2026 covers how to build a health score that actually triggers the right conversation rather than just dashboarding a number. For digital-touch motions where the CSM doesn't take every call, see digital-touch customer success in 2026.

Lever 3: Renewal-Motion Redesign

Renewal-motion redesign is the most underrated SaaS churn lever because most B2B SaaS companies still run renewals like a procurement event instead of a continuous validation program. The renewal call shouldn't be the first time anyone at the customer hears "are you getting value." It should be the closing summary of a 90-day signal-gathering window.

The redesigned 2026 SaaS renewal motion:

- T-120 days: Champion stability check. Run a brief conversational interview with the primary champion. Are they still in role? Still funded? Still the budget owner? Sponsor turnover at this stage is the single highest predictor of unmanaged churn.

- T-90 days: Outcome retrospective. A 10-minute AI-moderated interview asking what the account hoped to achieve when they bought, what they actually achieved, what's still missing. This produces the renewal narrative the CSM will use on the call.

- T-60 days: Multi-stakeholder breadth interview. Beyond the champion, who else uses the product, who else benefits, who would feel the loss if the contract didn't renew. This is the expansion conversation in disguise — and it's also the audit on single-threaded risk.

- T-30 days: Procurement and competitive pressure check. Conversational, not a written form. Are they evaluating alternatives? What would a competitor have to deliver to win the seat? What's the procurement timeline?

- T-0: The call itself becomes a confirmation, not a discovery session.
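The renewal timeline above can be derived from a single contract date. A minimal sketch, assuming calendar-day offsets and using the touchpoint labels from the list; the function and constant names are illustrative.

```python
from datetime import date, timedelta

# (days before renewal, touchpoint) taken from the timeline above.
RENEWAL_TOUCHPOINTS = (
    (120, "champion stability check"),
    (90, "outcome retrospective"),
    (60, "multi-stakeholder breadth interview"),
    (30, "procurement and competitive pressure check"),
    (0, "renewal call"),
)


def renewal_schedule(renewal_date: date) -> list[tuple[date, str]]:
    """Return each touchpoint with the calendar date it should run on."""
    return [
        (renewal_date - timedelta(days=days_before), label)
        for days_before, label in RENEWAL_TOUCHPOINTS
    ]


if __name__ == "__main__":
    for when, label in renewal_schedule(date(2026, 12, 31)):
        print(when.isoformat(), label)
```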

This is the pattern that distinguishes 100%+ NDR teams from 85% GRR teams. The case for replacing surveys with AI applies here directly — a static renewal survey 30 days out gets a 12% response rate and tells you nothing actionable. A conversation gets 60–80% response and tells you what's wrong while there's still time.

For the customer-success angle on this same architecture, see reduce customer churn with Perspective AI.

Lever 4: Expansion Gating

Expansion gating is the SaaS churn lever everyone skips because it shows up as a top-line headwind in the current quarter — and as a churn save in the quarter after. The pattern: sales pushes seat expansion or upsell into accounts that haven't activated the original purchase, the customer signs because the deal looks good, and three to six months later the entire account churns instead of the original cohort renewing flat.

A working expansion gating policy looks like this:

- No expansion before activation. If the original cohort hasn't hit the activation gate from Lever 1, expansion is blocked. This is a CRM-enforced rule, not a CSM judgment call.

- Health-score floor for expansion qualification. Accounts below the green threshold can renew, but cannot expand. Letting a yellow account expand turns next quarter's renewal into next quarter's churn.

- Stakeholder breadth requirement. Multi-product or multi-team expansion requires named stakeholders for each team. Single-threaded expansions churn at 2.5x the rate of multi-threaded ones.

- Conversational fit-check before contract. A 5-minute interview with the expansion buyer covering use case, success criteria, and timeline. This is the same model Perspective uses for win/loss interviews — applied to deal qualification instead of post-close analysis.
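The four rules above can be expressed as a single gate that returns the reasons an expansion is blocked. This is a sketch only: the `ExpansionRequest` fields are hypothetical stand-ins for whatever your CRM actually stores, and an empty result means the deal may proceed.

```python
from dataclasses import dataclass, field


@dataclass
class ExpansionRequest:
    activated: bool                 # original purchase hit its activation gate
    health_band: str                # "green", "yellow", or "red"
    teams: list[str]                # teams covered by the expansion
    named_stakeholders: dict[str, str] = field(default_factory=dict)  # team -> contact
    fit_check_completed: bool = False


def expansion_blockers(req: ExpansionRequest) -> list[str]:
    """Return every policy rule the request fails; empty means allowed."""
    blockers = []
    if not req.activated:
        blockers.append("original purchase not activated")
    if req.health_band != "green":
        blockers.append("health score below green threshold")
    missing = [team for team in req.teams if not req.named_stakeholders.get(team)]
    if missing:
        blockers.append("no named stakeholder for: " + ", ".join(missing))
    if not req.fit_check_completed:
        blockers.append("fit-check interview not completed")
    return blockers


if __name__ == "__main__":
    request = ExpansionRequest(True, "yellow", ["sales", "support"], {"sales": "Dana"})
    for reason in expansion_blockers(request):
        print("blocked:", reason)
```

Returning all failed rules at once, rather than stopping at the first, gives sales a complete list of what has to change before the deal can be resubmitted.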

Expansion gating is where finance, sales, CS, and product genuinely have to align. The teams that get this right report 8–12 point NDR improvement within four quarters because they stop manufacturing churn through over-expansion.

For the broader product feedback loop that supports this, feature prioritization without the guesswork shows how the same conversation engine feeds the roadmap.

The 30-60-90 Day Implementation Plan

The 30-60-90 day plan to operationalize this playbook assumes you already have a CRM, a product analytics tool, and either an existing CS function or a founder-led customer relationship layer. If you don't, churn isn't your top problem yet.

Days 1–30: Diagnose, don't deploy.

- Pull NDR, GRR, and logo churn for the last four quarters, segmented by ICP, by acquisition channel, and by contract length.

- Run 15–20 customer interviews — half with churned accounts from the last six months, half with high-NDR expanders. Use a structured script that probes activation, sponsor stability, and unmet expectations. The JTBD interview format is the right scaffold.

- Tag every interview against the four levers. The dominant cause cluster is your starting lever. Don't pick the lever you wish were the problem; pick the one the data picks.
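Picking the dominant cause cluster is a counting exercise once each interview carries a lever tag. A minimal sketch, assuming one tag per interview and the four lever names below as hypothetical labels:

```python
from collections import Counter

LEVERS = ("onboarding", "health_score", "renewal", "expansion")


def starting_lever(interview_tags: list[str]) -> str:
    """Return the lever tagged most often across the interviews.

    Tags outside LEVERS are ignored. On a tie, the lever that appeared
    first in the input wins, so review ties by hand.
    """
    counts = Counter(tag for tag in interview_tags if tag in LEVERS)
    if not counts:
        raise ValueError("no interviews tagged against a known lever")
    return counts.most_common(1)[0][0]


if __name__ == "__main__":
    tags = ["onboarding", "renewal", "onboarding", "expansion", "onboarding", "renewal"]
    print(starting_lever(tags))
```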

Days 31–60: Instrument and pilot one lever.

- Wire telemetry triggers for the chosen lever into your conversation tool. For most B2B SaaS in the 50–500 employee band, Lever 2 (health-score conversations) ships first because it has the fastest signal-to-action loop.

- Pilot with one segment — typically the highest-ARR cohort or the segment with the worst current NDR.

- Define a single success metric: net save rate (saves / at-risk accounts triggered) at 90 days.
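The pilot metric is a single ratio, shown here with a comparison against a baseline. The function names and the sample counts are illustrative assumptions; what counts as a "save" still has to be defined before the pilot starts.

```python
def net_save_rate(saved: int, triggered: int) -> float:
    """Saves divided by at-risk accounts that triggered a conversation."""
    if triggered <= 0:
        raise ValueError("triggered must be positive")
    if not 0 <= saved <= triggered:
        raise ValueError("saved must be between 0 and triggered")
    return saved / triggered


def pilot_beats_baseline(pilot_rate: float, baseline_rate: float) -> bool:
    """True when the pilot's save rate is strictly above the baseline."""
    return pilot_rate > baseline_rate


if __name__ == "__main__":
    pilot = net_save_rate(saved=18, triggered=40)     # hypothetical pilot counts
    baseline = net_save_rate(saved=12, triggered=40)  # hypothetical prior quarter
    print(f"pilot {pilot:.0%} vs baseline {baseline:.0%}:",
          "expand" if pilot_beats_baseline(pilot, baseline) else "hold")
```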

Days 61–90: Expand and harden.

- Roll the lever to a second segment if the pilot exceeded baseline save rate.

- Layer the next lever — typically Lever 1 (onboarding intervention) — because it produces the best LTV improvement per dollar of CS effort.

- Set up the quarterly NDR review with executive ownership outside the CS function. Retention is a company KPI in 2026, not a CS-team KPI.

The 2026 buyer's guide to AI-enabled customer engagement software covers tool selection criteria for each of these capabilities. For a unified VoC view that feeds all four levers, see the complete guide to voice of customer programs in 2026.

Frequently Asked Questions

What is a good SaaS churn rate in 2026?

A good SaaS churn rate in 2026 depends on segment: best-in-class B2B SaaS targets gross logo churn under 5% annually for mid-market and enterprise accounts, and under 1% monthly for SMB-heavy ARR books. Net dollar retention should clear 110% to be considered healthy and 120%+ to be considered top-quartile, per Bessemer State of the Cloud benchmarks. Pure SMB SaaS books often see 3–5% monthly logo churn even at high quality — the offset comes from acquisition velocity and expansion within the surviving cohort.

How do you reduce customer churn in SaaS without adding headcount?

You reduce customer churn in SaaS without adding headcount by replacing the "CSM books a call" pattern with telemetry-triggered AI-moderated conversations that capture context before a human gets involved. The CSM still runs the save play — but they only run it when the conversation has surfaced a savable cause, and they show up with the cause already identified. This is how scaled CS orgs at 1:100 ratios outperform legacy 1:30 ratios on net save rate. Scaled customer success without adding headcount covers the staffing math.

What's the difference between NDR and GRR for SaaS churn?

The difference between NDR and GRR is that gross revenue retention (GRR) measures only churn and downgrades, while net dollar retention (NDR) adds expansion. GRR can never exceed 100% because it strips out upsell and cross-sell, so it isolates how well you're holding the original ARR. NDR can exceed 100% when expansion outpaces churn, which is what every public SaaS investor cares about. Both metrics are required: NDR alone hides churn under expansion, and GRR alone misses the compounding value of land-and-expand motions.

How do AI customer interviews actually reduce churn?

AI customer interviews reduce churn by closing the gap between a churn signal firing in your telemetry and a human understanding the cause behind it. A traditional CS workflow takes 7–14 days to convert a red-flagged account into a structured conversation, by which point the customer has often already mentally moved on. AI-moderated interviews trigger within hours of a signal, run asynchronously at the customer's convenience, probe vague answers in real time, and return structured cause data that routes to the right play. The result is faster save cycles, higher save rates, and CS time spent on accounts that can actually be saved. See why your VoC program isn't telling you the full story.

Should we build or buy our SaaS retention program?

You should buy the conversation and analytics layer and build the routing logic in-house. The conversation engine — running structured interviews at scale, probing follow-ups, synthesizing themes — is a deeply specialized capability and rebuilding it eats engineering quarters. The routing logic — what triggers what conversation, who gets paged, how synthesized themes flow into your CRM and PLG product — is specific to your data shape and should live in your stack. Most failed retention programs fail at the routing layer, not the conversation layer.

How does this playbook differ from a general churn reduction guide?

This playbook differs from a general churn reduction guide because every lever is built around SaaS-specific economics: NDR/GRR math, the renewal motion, expansion gating, and the contracted-revenue compounding effect. A B2C subscription churn guide can talk about win-back campaigns and pricing tiers — those don't move the needle for a $200K ACV B2B SaaS contract with a one-year auto-renew clause and a procurement review at T-60. For the cross-segment fundamentals that apply outside SaaS, the general 2026 churn reduction guide is the right starting point.

Bringing It Together

The teams that reduce customer churn in SaaS in 2026 are the teams that stop treating churn as a CS-team problem and start treating it as a four-lever operational program: onboarding intervention, health-score-triggered conversations, renewal-motion redesign, and expansion gating. Each lever depends on closing the gap between telemetry signal and customer context — and that gap closes through structured conversations, not through more dashboards or larger CSM teams. Net dollar retention is now a board-level metric, expansion gating is now a finance discipline, and customer interviews are now an operational instrument that runs continuously rather than a quarterly project. The SaaS churn playbook that compounded value from 2020 to 2023 — survey + dashboard + reactive CSM — has stopped working at the multiples it used to.

Perspective AI is built for the diagnostic loop at the center of this playbook. Talk to our AI interviewer agent to see how telemetry-triggered customer conversations route signal into action, or explore use cases for CX and product teams to see how leading SaaS companies have wired the four levers into their renewal motion. Built for CX teams and product teams running retention as a company-wide program, not a quarterly fire drill.