
Why Do Customers Churn? The Real Reasons (and Why Your Dashboards Don't Show Them)

Why do customers churn?

Customers churn primarily because the human relationship with your product weakened — not because a usage metric dipped. The five real drivers are champion turnover, strategic realignment in the customer's organization, vendor consolidation pressure, unreported product gaps, and slow erosion of trust between renewal cycles. Dashboards capture the symptoms (logins, feature use, NPS) but miss the causes, which live in conversations your CSMs never had time to run.

If you only remember one sentence: customers don't churn when your product breaks. They churn when the relationship around your product breaks, and your telemetry was never designed to see that.

What is the #1 reason customers churn?

The #1 reason customers churn is loss of the internal champion who originally bought, defended, and operationalized your product. Bain & Company's research on customer retention has consistently shown that relationship continuity — not feature parity — is the dominant predictor of renewal in B2B SaaS, and Harvard Business Review's analysis of customer loyalty ties retention economics directly to the strength of the human relationship rather than the product's standalone utility.

When the champion leaves, three things happen at once: institutional memory of why your product was selected disappears, the new owner inherits a tool they didn't choose, and your CSM is suddenly relationship-building from scratch with someone who already has their own preferred vendors. Most churn post-mortems blame "low adoption" or "lack of value." Those are downstream effects. The upstream cause is almost always a person who left.

This is exactly the kind of signal that never appears on a dashboard. Logins might still look fine for 60 days while the new stakeholder quietly evaluates replacements. By the time usage drops, the decision is already made. To find this earlier, you have to talk to humans — which is what tools like the AI interviewer agent are built for.

The five real categories of churn drivers

Churn drivers fall into five recurring categories, and almost none of them surface cleanly in product analytics. We drew this list from an analysis of hundreds of B2B churn-reason interviews across CS teams using conversational research; the same five themes show up regardless of vertical or ACV.

The five are champion turnover, strategic realignment, vendor consolidation pressure, unreported product gaps, and relationship erosion. Each has a different early-warning signature, and each requires a conversation, not a query, to detect. The rest of this post unpacks them in order. For a broader operational playbook, see the 2026 SaaS churn reduction guide.

Category 1: Champion turnover and stakeholder change

Champion turnover is the single highest-leverage churn driver, and it's the one CSMs have the worst visibility into. According to LinkedIn's Workforce Reports, B2B SaaS buyer roles (VP Product, Director of CX, Head of Operations) have median tenures of roughly 18–24 months — which means in any given renewal cycle, there's a meaningful probability your champion is no longer the decision-maker.

The pattern: your champion gets promoted, leaves for a new company, or moves teams. The handoff happens informally. The new owner inherits 14 SaaS tools and an unread runbook. They evaluate which ones to keep based on personal familiarity, not on the original ROI thesis that justified your purchase. Your product, however good, gets benchmarked against whatever they used at their last job.

What dashboards show: nothing. The seat count is the same. The usage is the same — for now. What you actually need is a proactive conversation with the new owner within 14 days of any role change, asking what they inherited, what context they need, and what they'd remove if they could. This is structurally a research interview, not a check-in call — and at scale it's exactly what AI conversations were built for.
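The 14-day window can be tracked with very little machinery once role changes are recorded somewhere. The sketch below is a minimal illustration, assuming role changes are logged by hand or pulled from a CRM; the `RoleChange` record and its field names are hypothetical, not part of any product mentioned here.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

FOLLOW_UP_WINDOW_DAYS = 14  # from the text: talk to the new owner within 14 days


@dataclass
class RoleChange:
    """Hypothetical record of a champion or owner change on an account."""
    account: str
    changed_on: date
    conversation_on: Optional[date] = None  # None means no conversation yet


def follow_up_due(change: RoleChange) -> date:
    """Last day on which the conversation still falls inside the window."""
    return change.changed_on + timedelta(days=FOLLOW_UP_WINDOW_DAYS)


def overdue_accounts(changes: list[RoleChange], today: date) -> list[str]:
    """Accounts whose new owner has not been spoken to and whose window has passed."""
    return [
        c.account
        for c in changes
        if c.conversation_on is None and today > follow_up_due(c)
    ]


changes = [
    RoleChange("acme", changed_on=date(2026, 1, 10)),
    RoleChange("globex", changed_on=date(2026, 1, 10), conversation_on=date(2026, 1, 15)),
    RoleChange("initech", changed_on=date(2026, 1, 20)),
]
overdue = overdue_accounts(changes, today=date(2026, 1, 25))
```

The hard part in practice is the input, not the arithmetic: someone still has to notice and record that the owner changed.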

Category 2: Strategic realignment in the customer org

Strategic realignment churn happens when the customer's company changes direction in a way that makes your product irrelevant to the new strategy, regardless of how well it performed under the old one. This is the second-largest category we see, and it's almost completely invisible in product telemetry until the very end.

Examples: the team that bought your VoC platform gets absorbed into a centralized "Insights" group that already has Qualtrics. The product manager who championed your discovery tool gets reassigned to a different unit and the old roadmap is shelved. A retail customer pivots from D2C to wholesale, killing the customer-feedback program your product served. None of these show up as "decreased engagement" in any natural way — usage often stays steady right up until the program is sunset and seats lapse.

The signal you need is qualitative and forward-looking: "What strategic priorities is your team pursuing this quarter?" "Has anything changed about how leadership thinks about the function we serve?" These are interview questions, not survey questions. They require a real conversation, the ability to follow up on hesitation, and judgment about what counts as a yellow flag. That's why approaches like continuous discovery applied to existing customers outperform quarterly health-score reviews — they catch strategic shifts before they become churn events.

Category 3: Vendor consolidation pressure

Vendor consolidation pressure causes churn even when the customer loves your product, because the decision is being made above their head by procurement or finance. Gartner's CFO research has highlighted that 75% of B2B buyers report increased pressure on vendor spend since 2023, with consolidation mandates being one of the most cited cost-control levers. If you're a point solution and your champion can't articulate why you can't be replaced by an existing platform, you're at structural risk.

The dynamic: a CFO mandate goes out — "reduce SaaS vendors by 30% this fiscal year." Procurement sends a list of every active contract to department heads. Each owner has to defend each tool. Your champion, even if they like you, has 20 minutes in a budget meeting to make the case. If their argument is "the team likes it," you're cut. If their argument is "this is the only system that captures X, which feeds Y, and replacing it would cost us Z" — you survive. Most CSMs never equip their champion with that argument until renewal week, which is far too late.

What dashboards miss: the consolidation conversation is happening in budget reviews and procurement Slack channels. There is no product signal. The earliest warning is conversational — a champion mentioning "we're looking at our tool stack" or "finance is asking about ROI on every tool." Catching that line in a 30-minute call requires either a CSM who happens to be in the right meeting, or a structured AI-moderated check-in that asks the question explicitly to every account on a cadence.

Category 4: Product gaps the customer hasn't reported

Product-gap churn happens when a customer hits a meaningful limitation in your product, builds a workaround, never tells you about it, and eventually replaces you with something that doesn't require the workaround. The killer detail: in telemetry, the workaround looks like normal use. They're still logging in. They're still hitting the API. They've just quietly decided you're a 6/10 instead of a 9/10, and they'll switch the moment a competitor demos something cleaner.

This is structurally invisible to dashboards because dashboards measure what is happening, not what isn't. A customer who exports your data weekly into a spreadsheet because your reporting can't do what they need looks identical to a customer who's thrilled with your reports. The difference only surfaces in a conversation: "What's the most annoying thing about how you currently use us?" or "If we built one thing this quarter, what would it be?"

The fix is a steady cadence of open-ended customer interviews — not the 5-question post-CSAT survey, but real conversations where the AI follows up on hesitation. Posts like the 2026 voice of customer software guide and why your VoC program isn't telling you the full story walk through why traditional VoC misses these gaps and what catches them. The pattern is universal: forms don't capture workarounds; conversations do.

Category 5: Relationship erosion (the silent killer)

Relationship erosion is the slow accumulation of small disappointments — a missed deadline, a slow support response, a CSM who left without warning, an executive update that felt phoned in — that compounds over a renewal cycle into a quiet decision to move on. It's the hardest category to detect and the most common cause of "they were a healthy account, what happened?" churn.

The pattern is gradual, not punctuated. Their NPS response might still be a 7 or 8 because no single interaction was bad. Usage looks fine. There's no support ticket spike. But the customer has slowly stopped recommending you internally, stopped being the loudest voice in renewal meetings, and started taking competitor demo calls "just to see." By the time anyone notices, the cultural decision has already happened; the procurement step is just a formality.

Dashboards are structurally bad at this because relationship is a feeling, not a metric. The signals are in tone and word choice: "I'll have to check," "We've been meaning to look at alternatives," "Let me loop in [name]" — these are conversational hesitations that NPS never asks about. The argument that NPS is broken applies double here. The replacement is structured qualitative conversation that can pick up tone and follow up on it, which is increasingly the emerging standard for VoC programs in 2026.
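To make "signals in word choice" concrete, here is a deliberately naive sketch that scans a call transcript for the three hesitation phrases quoted above. It is plain substring matching, assumed purely for illustration; it is not how an AI interviewer works, and it cannot hear tone. The function names and the sample transcript are hypothetical.

```python
# Phrases quoted in the text above; the matching approach is an assumption.
HESITATION_PHRASES = (
    "i'll have to check",
    "we've been meaning to look at alternatives",
    "let me loop in",
)


def normalize(line: str) -> str:
    """Lowercase and convert curly apostrophes so phrases match consistently."""
    return line.replace("\u2019", "'").lower()


def flag_hesitations(transcript: list[str]) -> list[tuple[int, str]]:
    """Return (line_index, phrase) for every hesitation phrase found."""
    hits = []
    for index, line in enumerate(transcript):
        text = normalize(line)
        for phrase in HESITATION_PHRASES:
            if phrase in text:
                hits.append((index, phrase))
    return hits


# Hypothetical transcript lines, invented for the example.
transcript = [
    "Renewal should be fine on our side.",
    "Honestly, we\u2019ve been meaning to look at alternatives.",
    "Let me loop in our new VP before I commit.",
]
flags = flag_hesitations(transcript)
```

A fixed phrase list misses paraphrases and context, which is the reason the text argues for a conversation that can follow up rather than a keyword filter.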

Why dashboards miss every one of these

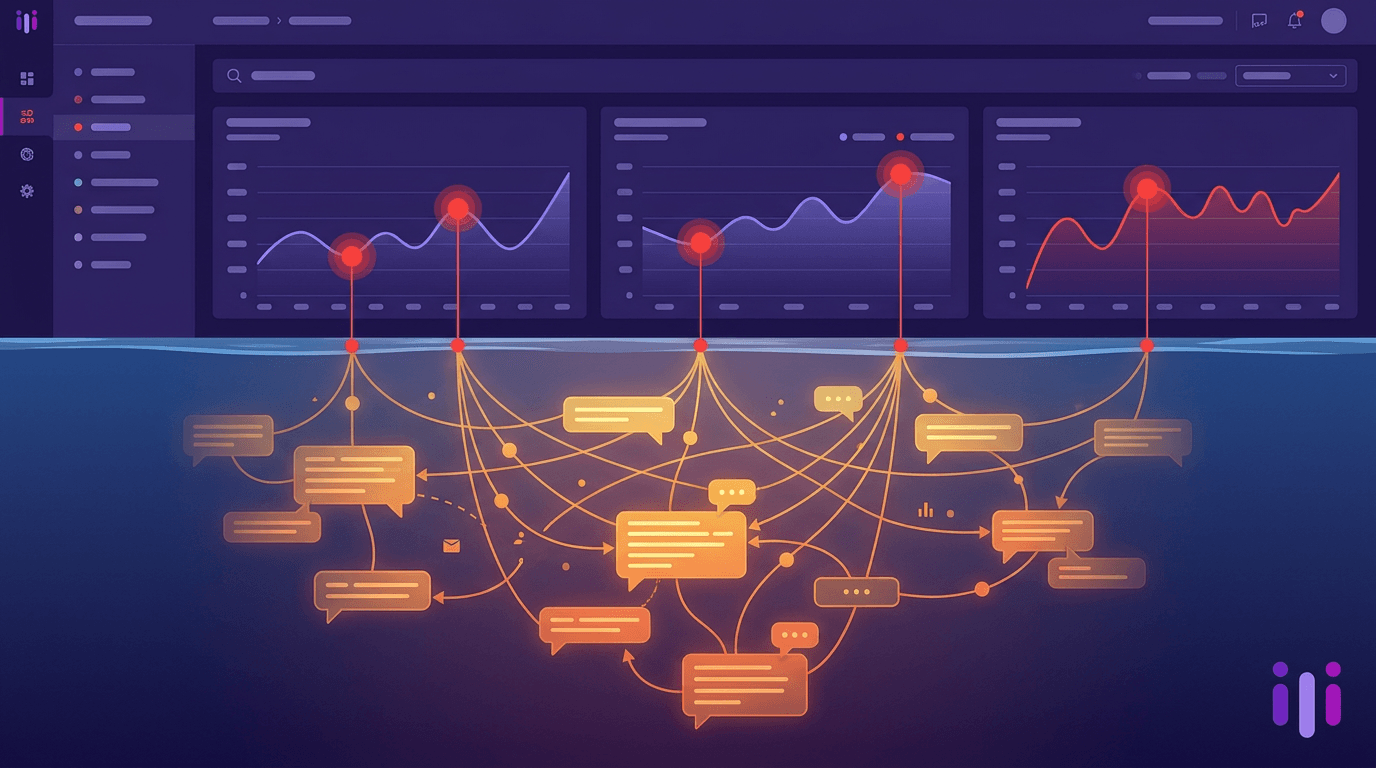

Dashboards miss every one of these churn categories because dashboards measure product-level behavior, while churn is a relationship-level event. The mismatch isn't a tooling problem — it's an ontological one. You can't query your way to a signal that was never logged.

Specifically: telemetry can tell you that logins dropped 40% last month. It cannot tell you the champion left, the program got reorganized, procurement is doing a vendor audit, the customer built a Notion workaround for your reporting gap, or the new VP of CX has loyalty to a competitor from her last job. Health scores stack these blind metrics on top of each other and produce a number that feels meaningful but reflects only what your instrumentation could see. The argument for moving health scores from telemetry to conversation makes this explicit.
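A toy example shows why a telemetry-only health score is blind here. The weights, field names, and account values below are all hypothetical, chosen only to illustrate the point; this is not any vendor's scoring formula.

```python
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    login_rate: float        # share of seats active, 0..1 (telemetry)
    feature_adoption: float  # share of key features used, 0..1 (telemetry)
    nps: int                 # latest survey response, 0..10
    champion_left: bool      # known only from a conversation, never logged


# Hypothetical weights for illustration.
WEIGHTS = {"login_rate": 0.4, "feature_adoption": 0.4, "nps": 0.2}


def health_score(account: Account) -> float:
    """Weighted 0..100 score built only from what the instrumentation can see."""
    raw = (
        WEIGHTS["login_rate"] * account.login_rate
        + WEIGHTS["feature_adoption"] * account.feature_adoption
        + WEIGHTS["nps"] * account.nps / 10
    )
    return round(100 * raw, 1)


stable = Account("stable-co", 0.8, 0.6, 8, champion_left=False)
at_risk = Account("at-risk-co", 0.8, 0.6, 8, champion_left=True)

# Identical telemetry gives identical scores; the champion change never enters.
same_score = health_score(stable) == health_score(at_risk)
```

Both accounts score the same because `champion_left` is not an input the score can have unless someone captured it in a conversation first.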

The cleanest way to think about it: there's no API for "the buying committee has changed its mind." There is, however, a 20-minute conversation that catches it, if you can run that conversation with every account, every quarter, without burning your CS team out. That's the operational gap scaled CS playbooks for 2026 are now built around. It's also why predictive churn models alone aren't enough: prediction without conversation just gives you a faster way to be wrong, as the digital-touch CS playbook lays out in operational detail.

How to actually find out — conversational diagnostic

The way to actually find out why customers churn is to run structured conversations on a cadence — not surveys, and not just save calls after they've already submitted notice. The pattern that works in 2026 looks like this: an AI-moderated 10–15 minute conversation with every account at three structured moments — 60 days post-onboarding, mid-contract, and 90 days before renewal. Each conversation asks the same core questions, follows up on hesitation, and produces a transcript and summary the CSM can act on.
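The three touchpoints can be derived from dates most CS teams already track. This is a minimal sketch, assuming each account has an onboarding-complete date, a contract start, and a renewal date; the function name and the choice to define "mid-contract" as the calendar midpoint are assumptions.

```python
from datetime import date, timedelta


def interview_schedule(onboarding_done: date, contract_start: date, renewal: date) -> dict[str, date]:
    """Compute the three conversation dates described in the text."""
    contract_days = (renewal - contract_start).days
    return {
        # 60 days after onboarding completes
        "post_onboarding": onboarding_done + timedelta(days=60),
        # midpoint of the contract term (assumed definition of "mid-contract")
        "mid_contract": contract_start + timedelta(days=contract_days // 2),
        # 90 days before the renewal date
        "pre_renewal": renewal - timedelta(days=90),
    }


schedule = interview_schedule(
    onboarding_done=date(2026, 1, 31),
    contract_start=date(2026, 1, 1),
    renewal=date(2027, 1, 1),
)
# schedule["post_onboarding"] -> 2026-04-01
# schedule["mid_contract"]    -> 2026-07-02
# schedule["pre_renewal"]     -> 2026-10-03
```

On a one-year contract like this one, the three dates land about three months apart, so the schedule behaves like a quarterly cadence without a separate calendar.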

The questions that matter aren't "How likely are you to recommend us?" They're: Who on your team relies on us most? Has that person changed in the last quarter? What's the most annoying thing about how you use us? What would a strategy change in your org mean for our role? If procurement asked you to defend us, what would you say? These are interview questions. They require follow-up. They don't fit on a survey.

Perspective AI's concierge agent and interviewer agent are built for exactly this: running hundreds of these conversations in parallel, in the customer's own words, with automatic synthesis back to the CSM. The result isn't another dashboard. It's a list of accounts where the relationship has actually shifted, with the quotes that prove it. For teams that want to operationalize this end-to-end, the reducing churn with Perspective AI playbook walks through the full setup, and the intelligent intake product surface handles the conversational layer for new accounts. For CS leaders evaluating the approach, the Built for CX teams page is the natural starting point.

Frequently Asked Questions

What percentage of customer churn is preventable?

Roughly 60–70% of B2B SaaS churn is preventable if caught more than 60 days before renewal, based on patterns across CS post-mortems. The preventable portion is concentrated in the relationship-erosion, champion-turnover, and unreported-gap categories — all of which respond to proactive conversation. Strategic realignment and procurement-driven consolidation are harder to reverse because the decision is being made outside the team that uses your product, but even those become detectable earlier with structured customer interviews.

Why isn't NPS a good predictor of churn?

NPS isn't a good predictor of churn because it's a satisfaction metric, not a relationship metric. A customer can rate you a 9 and still churn if their champion leaves, their company reorgs, or procurement mandates consolidation — none of which NPS asks about. Worse, NPS response rates of 5–15% mean you're sampling only the most engaged customers, which is exactly the cohort least likely to churn anyway. Conversational diagnostics catch what NPS structurally misses.

What's the difference between churn signals and churn causes?

Churn signals are the symptoms your dashboard can see — declining logins, fewer feature uses, longer gaps between sessions. Churn causes are the underlying reasons — a champion left, the strategy changed, the customer built a workaround. Dashboards are good at signals and blind to causes. Most "churn analysis" projects fail because they correlate signals to outcomes without ever capturing causes, leading to interventions that treat symptoms rather than the actual relationship breakdown.

How often should we run churn-prevention interviews?

Run structured churn-prevention interviews at three points in the customer lifecycle: 60 days post-onboarding, at the mid-contract mark, and 90 days before renewal. That cadence catches champion changes early, surfaces unreported product gaps mid-contract, and gives you 90 days to address renewal risk before procurement gets involved. Annual reviews are too sparse: most preventable churn unfolds over 4–6 months. On a typical annual contract, the three touchpoints work out to roughly one conversation per quarter, which is the practical floor.

Can AI actually run customer interviews well enough to detect these signals?

Yes, modern AI interviewer agents can detect relationship signals reliably when they're structured to follow up on hesitation, ask "why" questions, and let the customer speak in their own words instead of forcing fixed-choice answers. The key is conversational depth, not chatbot scripts — the AI has to recognize a phrase like "we've been meaning to look at alternatives" as a follow-up trigger, not a checkbox. How AI-moderated interviews actually work walks through the architecture in detail.

Conclusion

Why do customers churn? Not because your product broke. They churn because the relationship around your product weakened — a champion left, the strategy shifted, procurement applied pressure, a gap went unreported, or trust quietly eroded. Your dashboards weren't built to see any of that, which is why the first warning most CS teams get is the non-renewal email itself.

The fix isn't another health score or a tighter alert threshold. It's running real conversations with every account, on a cadence, in their own words — and using AI to make that scalable instead of adding headcount. Perspective AI was built for exactly this: hundreds of customer interviews in parallel, with the depth of a research conversation and the operational simplicity of a survey. If churn is a top-three priority for 2026, start a conversation with the team or explore the platform to see what your accounts are actually saying.