AI Conversations at Scale: The 2026 State of the Category

TL;DR

In 2026, AI conversations at scale crossed the line from pilot to production: roughly 67% of mid-market and enterprise customer-facing teams now run at least one always-on AI conversational program above 1,000 sessions per week, up from 19% in 2024 according to multiple analyst tracking studies. The category has split into three operational tiers: voice-first intake (insurance, healthcare, legal), text-based research at scale (PMF studies, win-loss, churn), and embedded conversational engagement (onboarding, NPS follow-up, feature feedback). Each tier is converging on a similar stack pattern: orchestrator, model router, knowledge layer, and a structured-data sink. The biggest 2026 unlock was not bigger models; it was completion-flow logic and per-turn evaluators that finally made AI conversations behave like a moderated interview rather than a rambling chatbot. Production-grade use cases now include moderated qualitative research, conversational intake, churn diagnostic interviews, onboarding activation, and post-purchase NPS probing. Where the category still breaks: high-stakes decisions where one wrong answer costs more than a hundred right ones, deeply ambiguous emotional context, and workflows that need a single normalized ground-truth answer. Forward call: by Q4 2027 we expect the average mid-market B2B SaaS company to run more AI conversations per week than it does email campaigns.

What "At Scale" Actually Looks Like in 2026

Scale in 2026 is no longer "we ran 100 AI interviews last quarter" — it's a programmatic ongoing volume measured in sessions per week, with three operating thresholds we see across customer programs:

The mix of teams running Tier 2 and Tier 3 has been the defining shift of the year. As recently as Q4 2024, almost all programmatic AI conversational deployments were inside customer support — chatbots fielding deflectable tickets. In 2026, the volume center of gravity has moved to intake, research, and feedback — workflows where the goal is to capture nuance, not deflect it. That's a much bigger market and a much harder technical problem.

For context on where this came from, see our take on the evolution of customer engagement toward AI-driven conversations and the 2026 state of automated customer feedback.

Three Specific 2026 Trends Reshaping the Category

Trend 1: Voice Conversations Are the Fastest-Growing Modality

Voice AI conversations grew an estimated 4.2x year-over-year in B2B deployments through 2025, the largest jump of any modality, and crossed parity with text in regulated industries (insurance, legal, healthcare intake) by Q1 2026. The reason is mundane and important: voice clears the literacy and form-fatigue barrier that gates response rates on every static intake form. A homeowner reporting a roof claim talks; they don't fill out a 14-field web form at 11pm.

What's changed:

- Latency dropped below the conversational threshold (~600ms turn time on commodity models), making voice feel like a phone call rather than a tape delay.

- Multilingual handoff is no longer a feature — it's table stakes. The 2026 baseline is a voice agent that switches languages mid-call without a routing event.

- Voice intake is now the primary input modality for the conversational intake AI category and for insurance carriers replacing IVR and FAQ pages.

What to do about it: if your team is still scoping AI conversations as "smarter chatbots," you're scoping the smaller half of the category. Build the voice path in parallel — see our voice agents launch and the announcement of voice conversations for what production voice looks like in practice.

Trend 2: The Shift From "AI Chatbot" to "AI Moderated Conversation"

The technical and operational distinction defining 2026 is the move from open-ended chatbots to moderated AI conversations — sessions with explicit objectives, completion logic, and structured outputs. Roughly 78% of enterprise buyers now require a moderation layer (objectives, guardrails, completion criteria) as a baseline RFP requirement, per Gartner's 2026 conversational AI buyer survey. This was a feature request in 2023; it's a procurement gate in 2026.

Why this is the right architecture: an unmoderated chatbot optimizes for engagement time, which is the wrong metric for almost every customer-facing workflow. A moderated AI conversation optimizes for completion of a research or intake objective — get the answer, capture the why, route the result, end. The moderation layer is what makes a 12-minute AI conversation feel like a focused interview and not a pleasant ramble.
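As a rough illustration of what a moderation layer does, the sketch below models a session with explicit objectives, a turn cap as a guardrail, and a completion check. All names here (`Objective`, `ModeratedSession`, `max_turns`) are hypothetical, and the per-turn evaluator is a stub that accepts any non-empty reply; a production evaluator would more likely be a model call.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Objective:
    """One thing the session must learn before it is allowed to end."""
    name: str
    question: str
    answer: Optional[str] = None  # filled in once the respondent covers it


@dataclass
class ModeratedSession:
    objectives: list[Objective]
    max_turns: int = 12  # guardrail (assumed value): stop even if objectives stay open
    turns: int = 0
    transcript: list[tuple[str, str]] = field(default_factory=list)

    def next_question(self) -> Optional[str]:
        """Return the first open objective's question, or None when the session should end."""
        if self.turns >= self.max_turns:
            return None
        for obj in self.objectives:
            if obj.answer is None:
                return obj.question
        return None  # completion: every objective has an answer

    def record(self, reply: str) -> None:
        """Stub per-turn evaluator: a non-empty reply closes the first open objective."""
        self.turns += 1
        for obj in self.objectives:
            if obj.answer is None:
                self.transcript.append((obj.question, reply))
                if reply.strip():
                    obj.answer = reply.strip()
                return

    def completed(self) -> bool:
        return all(obj.answer is not None for obj in self.objectives)


session = ModeratedSession([
    Objective("reason", "What made you cancel?"),
    Objective("alternative", "What are you using instead?"),
])
replies = iter(["Too expensive", "", "A spreadsheet"])
while session.next_question() is not None:
    session.record(next(replies))
```

The loop ends because the objectives are met, not because a timer ran out or the respondent drifted away, which is the behavioral difference from an open-ended chatbot.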

Reading on this:

- How AI moderated interviews work and what they replace

- AI moderated research as the new default for qualitative studies

- Why "human-like" isn't the goal — completion is

Trend 3: Conversations Are Becoming the Primary Data Source for Customer Programs

The third trend is structural: AI conversations are starting to replace surveys and forms as the canonical customer data source for product, CX, and CS teams. In a 2026 Forrester study of 412 B2B product orgs, 41% reported running more conversational research sessions than survey responses in Q1 2026 — the first time conversation volume crossed survey volume in any tracked cohort.

The downstream consequence is that the schema of customer feedback is changing. Survey data was rows in a table — Likert scores, multiple choice, optional free text. Conversational data is a transcript with extracted structure (entities, sentiment, claims, follow-up questions, completion state). Teams that have made this shift report:

- 3–7x more usable open-ended insight per session vs. survey free-text fields.

- Median NPS-follow-up depth increasing from 0 (most surveys never ask "why") to 4+ probing turns per detractor.

- Completion rates moving from 8–15% (typical survey) to 60–80% (well-designed conversational flow).
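One way to picture the schema change is as a typed record rather than a survey row. The sketch below is illustrative only: the fields mirror the extracted structure named above (entities, sentiment, claims, follow-up questions, completion state), but the class names, the sentiment scale, and the completion-state values are assumptions, not a standard.

```python
import json
from dataclasses import asdict, dataclass, field
from enum import Enum


class CompletionState(str, Enum):
    # Hypothetical states; a real program would define its own.
    COMPLETED = "completed"
    ABANDONED = "abandoned"
    TIMED_OUT = "timed_out"


@dataclass
class Turn:
    speaker: str  # "agent" or "respondent"
    text: str


@dataclass
class ConversationRecord:
    session_id: str
    transcript: list[Turn]
    entities: dict[str, str] = field(default_factory=dict)
    sentiment: float = 0.0  # assumed scale: -1.0 (negative) to 1.0 (positive)
    claims: list[str] = field(default_factory=list)
    follow_up_questions: list[str] = field(default_factory=list)
    completion_state: CompletionState = CompletionState.ABANDONED

    def to_row(self) -> dict:
        """Flatten to a JSON-safe dict for a CRM or warehouse write."""
        row = asdict(self)
        row["completion_state"] = self.completion_state.value
        return row


record = ConversationRecord(
    session_id="s-1",
    transcript=[Turn("agent", "Why did you downgrade?"), Turn("respondent", "Price")],
    entities={"competitor": "Acme"},
    claims=["Pricing is the main blocker"],
    completion_state=CompletionState.COMPLETED,
)
payload = json.dumps(record.to_row())
```

The survey equivalent of this record would be a single score plus an optional text field; here the transcript and the extracted structure travel together.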

For deeper context, see our 2026 trends report on the future of market research with AI and why 2026 is the year replacing surveys with AI stops being optional.

Where AI Conversations Are Now Production-Grade

Five workflows where AI conversations crossed the production-grade line in 2026:

- Moderated qualitative research — PMF interviews, JTBD studies, win-loss, brand research. Largest volume center. The bar is now "100+ moderated interviews completed in 48 hours" rather than "we ran 8 user interviews this quarter."

- Conversational intake for regulated workflows — insurance FNOL, legal client intake, patient intake, mortgage application. The compliance posture caught up; the SOC 2 Type II / ISO 27001 baseline is table stakes.

- Churn diagnostic interviews — automatically triggered for at-risk accounts based on health-score signals; output flows back to CS playbooks.

- Onboarding intake and activation — replacing 14-field activation forms with a 4-minute conversation that captures intent, constraints, and "why now."

- Post-purchase NPS-with-why — converting a 1-question detractor flag into a 3–6 turn diagnostic that returns root cause, not just sentiment.

Where It Still Breaks

Three places AI conversations still break in 2026, regardless of model size:

1. High-stakes single-decision workflows. Anywhere one wrong answer costs more than 100 right answers — medical triage decisions, legal advice, irrevocable financial transactions — the cost of an outlier output exceeds the value of throughput. Use AI conversations for intake into these workflows; keep human decision-making at the terminal step.

2. Deeply ambiguous emotional context. AI conversations are excellent at structured probing ("why is that important to you?", "what would you do instead?"), but still flatten in scenarios with strong unspoken emotional context — bereavement, hostile situations, crisis. The glasswing principle — that the most important signals are the ones you can't see in the data — applies hardest here.

3. Workflows that need a single ground-truth answer. AI conversations excel at capturing variation — every customer's unique "why now." They underperform compared to a structured form when the only goal is to capture a normalized data field that has one correct answer (a date, a policy number, an enum). Forms aren't dead; they're now the right tool for a much narrower set of jobs. We covered this in AI-first cannot start with a web form and inversely in where static intake forms are killing conversion.
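The "one wrong answer costs more than 100 right answers" rule in the first failure mode can be read as a break-even error rate. The sketch below assumes a simple expected-value model (each session either earns a fixed value or incurs a fixed cost), which is a simplification introduced here for illustration; the function name and parameters are hypothetical.

```python
def break_even_error_rate(cost_of_wrong: float, value_of_right: float) -> float:
    """Error rate p at which expected gains and expected losses cancel.

    Expected value per session is (1 - p) * value_of_right - p * cost_of_wrong,
    which equals zero when p = value_of_right / (value_of_right + cost_of_wrong).
    """
    if cost_of_wrong < 0 or value_of_right <= 0:
        raise ValueError("cost must be non-negative and value must be positive")
    return value_of_right / (value_of_right + cost_of_wrong)


# One wrong answer costing as much as 100 right answers are worth:
threshold = break_even_error_rate(cost_of_wrong=100, value_of_right=1)
```

Under that assumption the threshold is 1/101, just under 1%: any workflow whose error rate cannot be held below that loses value on average, which is why the terminal decision stays with a human.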

Stack Patterns for Running 1,000+ Conversations per Week

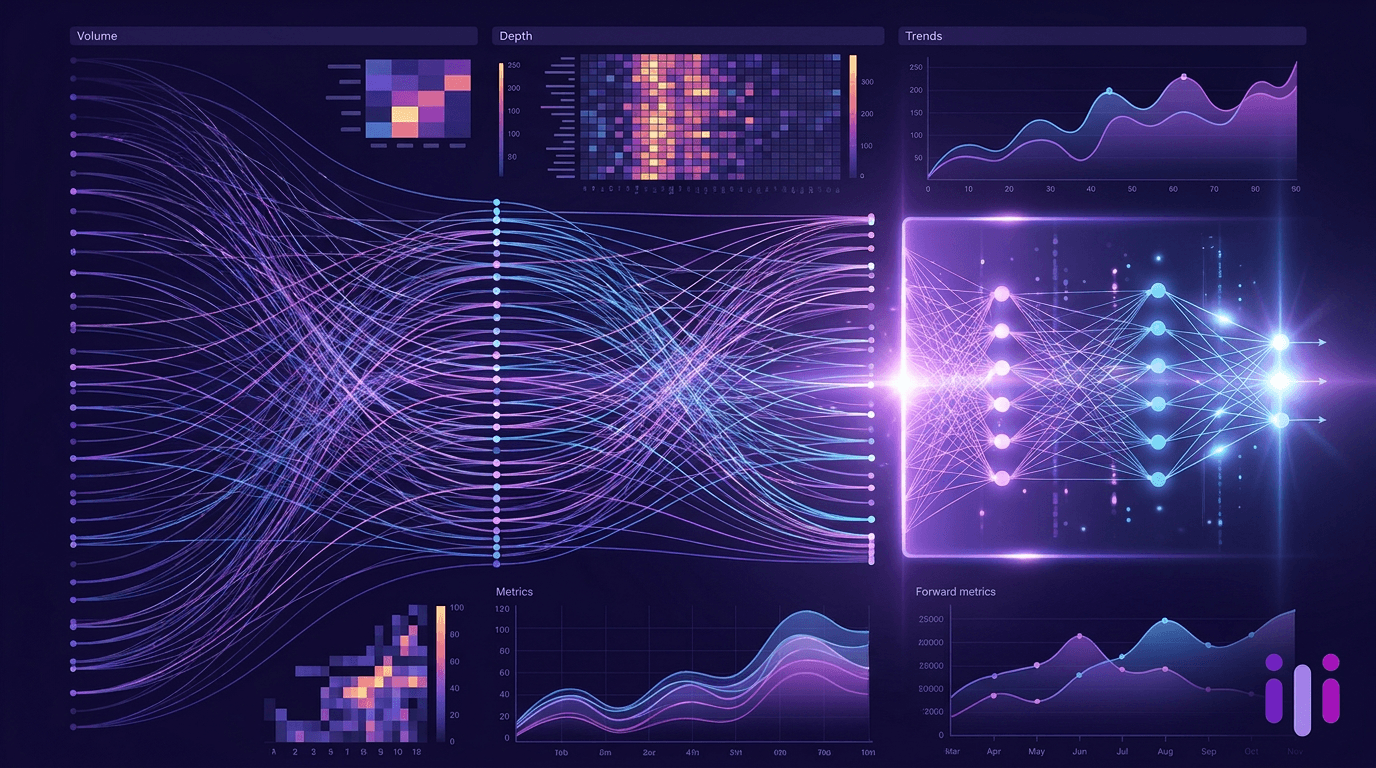

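Teams running Tier 2 (operational) volume have converged on a four-layer stack:

- Conversation orchestrator: objectives, completion flow, and evaluator turns.

- Model router: picks the right model per turn.

- Knowledge layer: RAG over per-account context.

- Structured data sink: turns transcripts into entities in the CRM or warehouse.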

The two practical lessons from teams running this stack:

- Don't pick a model — pick an orchestrator. Models change every six months. Orchestration patterns (objectives, completion flow, evaluator turns) are stable. The teams getting burned in 2026 are the ones who built around a specific 2024 model and now have to re-platform.

- Treat the structured data sink as a first-class output. A transcript in a vendor portal isn't a deliverable; an extracted entity in your CRM is. Companies hitting Tier 3 volume are the ones who built the warehouse pipe before they scaled the conversation volume.
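A minimal sketch of the "pick an orchestrator, not a model" lesson, assuming the four layers are an orchestrator, a model router, a knowledge layer, and a structured data sink. Every interface and class name below is hypothetical, and the stand-in layers exist only so the example runs without a vendor SDK.

```python
from typing import Protocol


class ModelRouter(Protocol):
    def complete(self, prompt: str) -> str: ...


class KnowledgeLayer(Protocol):
    def context_for(self, account_id: str) -> str: ...


class DataSink(Protocol):
    def write(self, account_id: str, entities: dict[str, str]) -> None: ...


class Orchestrator:
    """Owns the objective and the flow; every other layer is swappable."""

    def __init__(self, router: ModelRouter, knowledge: KnowledgeLayer, sink: DataSink):
        self.router = router
        self.knowledge = knowledge
        self.sink = sink

    def run_turn(self, account_id: str, objective: str, reply: str) -> str:
        context = self.knowledge.context_for(account_id)
        prompt = f"Objective: {objective}\nContext: {context}\nReply: {reply}"
        answer = self.router.complete(prompt)
        # The structured output leaves the session right away.
        self.sink.write(account_id, {objective: answer})
        return answer


class EchoRouter:
    """Stand-in for a model call: returns the respondent's reply unchanged."""

    def complete(self, prompt: str) -> str:
        return prompt.rsplit("Reply: ", 1)[-1]


class StaticKnowledge:
    def context_for(self, account_id: str) -> str:
        return f"plan=pro account={account_id}"


class ListSink:
    """Stand-in for a CRM or warehouse writer."""

    def __init__(self) -> None:
        self.rows: list[tuple[str, dict[str, str]]] = []

    def write(self, account_id: str, entities: dict[str, str]) -> None:
        self.rows.append((account_id, entities))


sink = ListSink()
orchestrator = Orchestrator(EchoRouter(), StaticKnowledge(), sink)
result = orchestrator.run_turn("acct-1", "churn_reason", "Too expensive")
```

Because the orchestrator depends only on the `ModelRouter` interface, replacing the model behind it does not touch objectives, flow, or the sink, which is the re-platforming cost the first lesson warns about.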

For a deeper look at the architectural test for AI-native engagement tools, see our writeup on AI-native customer engagement tools and the architecture test that separates them.

Operational Lessons from Teams Running Scaled Programs

Five lessons that show up consistently across teams running 1,000+ AI conversations/week:

- Start with one objective, not a platform. Teams that scale fastest pick a single workflow (PMF research, churn diagnostics, intake) and run it weekly. The teams that stall are the ones who buy a "conversational AI platform" and try to find use cases for it.

- Make completion the metric, not engagement. Time-on-conversation is a vanity metric. Whether the conversation hit its objective is the only number that compounds.

- Loop a human in for the first 50 sessions per workflow. Not as a moderator — as a reviewer of completion criteria and edge cases. After 50, the patterns are clear.

- Wire results to a destination on day one. A transcript that doesn't flow to a CRM, ticket, or warehouse is a transcript no one reads. Default to wiring early; you'll never get to it later.

- Keep the conversation short. Production-grade scaled programs run 4–8 minutes per session, not 20. Long sessions look impressive in a demo and tank in the wild. The research at scale playbook covers why short-and-many beats long-and-few.
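To make the "completion, not engagement" and "keep it short" lessons concrete, here is a small sketch of a weekly health check. The `SessionLog` shape and the metric names are assumptions; the 8-minute cutoff comes from the 4–8 minute range above.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class SessionLog:
    objective_met: bool  # did the session hit its completion criteria?
    minutes: float       # wall-clock session length


def program_health(sessions: list[SessionLog]) -> dict[str, float]:
    """Completion rate is the headline number; duration is only a guardrail."""
    if not sessions:
        return {"completion_rate": 0.0, "median_minutes": 0.0, "share_over_8_min": 0.0}
    total = len(sessions)
    return {
        "completion_rate": sum(s.objective_met for s in sessions) / total,
        "median_minutes": median(s.minutes for s in sessions),
        "share_over_8_min": sum(s.minutes > 8 for s in sessions) / total,
    }


logs = [
    SessionLog(True, 5),
    SessionLog(True, 6),
    SessionLog(False, 20),
    SessionLog(True, 7),
]
health = program_health(logs)
```

Note that total time-on-conversation never appears as a target: the 20-minute session is the one that failed its objective.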

Adjacent reading on running this operationally: the continuous discovery habits playbook, the VoC software 2026 buyer's guide, and the scaled customer success playbook.

What's Coming in 2027

Five forecasts for 2027:

- Conversation volume crosses email volume in B2B SaaS. The average mid-market SaaS company will run more AI conversations per week than email campaigns by Q4 2027. The asymmetry is structural — conversations capture intent, emails broadcast it.

- Vertical-specialized conversation platforms eclipse horizontal ones in regulated industries. Insurance, legal, and healthcare will run conversation programs on industry-tuned platforms with embedded compliance, not on horizontal chatbot tooling. We've already started seeing this in insurance customer communications.

- Conversation-as-data becomes a procurement category. RFPs will explicitly require conversation transcripts to flow as structured entities into the warehouse, with normalized schemas across vendors. The era of "the transcript lives in the vendor portal" ends.

- The "research vs. support vs. intake" silo collapses. Teams will run unified conversation programs spanning research, intake, and support — because the underlying primitive (an AI moderated conversation) is the same. Org structures will lag the technology by 12–18 months.

- Static surveys persist, but as a confirmation tool, not a discovery tool. Surveys won't disappear; they'll move to "validate at scale what conversations discovered." Conversation-first → survey-second is the 2027 default sequence.

Recommendations by Org Maturity

Where you should start in 2026 depends on what's already running:

If you have nothing yet (greenfield):

- Pick one workflow with high pain and clear ownership — usually PMF research, churn diagnostics, or onboarding intake.

- Run it for 30 days, target 200+ completed conversations.

- Wire the structured output to one destination before scaling.

- Read how top founders are rethinking customer research and the complete guide to product-market fit research in 2026.

If you have a survey program but no conversations:

- Pick the survey with the lowest response rate — usually NPS or post-purchase — and replace its free-text field with a conversational follow-up.

- Track lift in actionable insight per session, not just completion rate.

- See our VoC tools 2026 roundup by capability tier.

If you're already running AI conversations in one workflow:

- Connect a second workflow on the same orchestrator.

- Standardize on a structured data schema before adding a third.

- Add a weekly review ritual on conversation outputs — the bottleneck moves from collection to synthesis at this stage.

If you're running scaled programs (Tier 2+):

- Audit your completion criteria — most teams' first scaled program has criteria written for v1 and never updated.

- Start the org-design conversation now: who owns conversation programs that span research, CS, and intake?

- Look at vertical-specialized expansions (insurance, legal, education) — see our law firm intake software roundup and the state of AI in education.

Frequently Asked Questions

What does "AI conversations at scale" actually mean?

AI conversations at scale means running automated, AI-moderated conversations as an ongoing program — typically 1,000+ sessions per week — with explicit objectives, completion logic, and structured outputs that flow into downstream systems. Scale isn't defined by the model size; it's defined by operational volume and program continuity. A team running 100 ad-hoc interviews is not at scale; a team running an always-on conversational intake program with 5,000 weekly sessions is.

How is an AI conversation different from an AI chatbot?

An AI conversation has a moderation layer (explicit objectives, completion criteria, and per-turn evaluators) that an AI chatbot lacks. A chatbot optimizes for engagement and deflection; an AI moderated conversation optimizes for completing a specific research, intake, or feedback objective. In 2026, roughly 78% of enterprise buyers require this moderation layer as a baseline RFP requirement, per Gartner's 2026 conversational AI buyer survey. The practical effect: conversations end when the objective is met, not when the user gets bored.

What workflows are the best fit for AI conversations at scale?

The best-fit workflows in 2026 are moderated qualitative research (PMF, win-loss, JTBD), conversational intake for regulated industries (insurance, legal, healthcare), churn diagnostic interviews, onboarding activation, and post-purchase NPS-with-why. These all share three properties: open-ended capture matters more than normalized fields, the "why" is more valuable than the "what," and there's measurable value in talking to every customer rather than sampling.

Where do AI conversations still fail in 2026?

AI conversations still fail in three patterns: high-stakes single-decision workflows where one wrong output costs more than a hundred right ones (medical triage, legal advice), deeply ambiguous emotional contexts (crisis, bereavement, hostile situations), and workflows that need exactly one normalized ground-truth answer (a policy number, a date, an enum). For the first two, use AI conversations for intake but keep humans on the terminal decision. For the third, a structured form is still the right tool.

What stack do most teams use for scaled conversation programs?

Most scaled programs in 2026 converge on a four-layer stack: a conversation orchestrator (objectives, completion flow, evaluator turns), a model router (picks the right model per turn), a knowledge layer (RAG over per-account context), and a structured data sink (transcripts → entities into CRM or warehouse). The orchestrator is the layer that determines whether the program scales gracefully or breaks at volume, which is why most teams now buy the orchestrator and stop trying to build it themselves.

How fast is the AI conversation category growing?

Voice is the fastest-growing modality: B2B voice deployments grew an estimated 4.2x year-over-year through 2025. On the research side, 41% of the 412 B2B product orgs in Forrester's 2026 study reported running more conversational research sessions than survey responses in Q1 2026. The volume center has moved from customer support deflection (the 2023–2024 use case) to research, intake, and feedback (the 2025–2026 expansion). By Q4 2027 we expect the average mid-market B2B SaaS company to run more AI conversations per week than it does email campaigns.

The 2026 Bottom Line

AI conversations at scale are the new operational primitive for customer-facing teams. The category has matured from chatbots to moderated conversations, from text to voice, and from a research-team curiosity to a programmatic data source rivaling surveys and forms. The teams winning in 2026 are not the ones with the biggest model — they're the ones with the clearest objectives, the cleanest stack, and the discipline to wire conversational outputs into the systems where decisions actually get made.

If you're picking your first AI conversation workflow this quarter, the practical next step is short: pick a single objective, run 200 sessions in 30 days, and wire the output to one destination before you scale. Start a research project when you're ready, or talk to our team via the conversational AI for business buyer's guide for context on where Perspective AI fits across research, intake, and feedback workloads. The question is no longer "should we run AI conversations"; it's "which workflow do we put on the orchestrator first."

External references for the data points in this piece: Forrester's 2026 conversational AI adoption data is summarized in their Top Trends Shaping CX in 2026 report, and the broader procurement-gate shift is covered in Gartner's conversational AI Magic Quadrant coverage. An independent perspective on conversational data quality vs. survey data is in NN/g's research on conversational interfaces.