16 min read

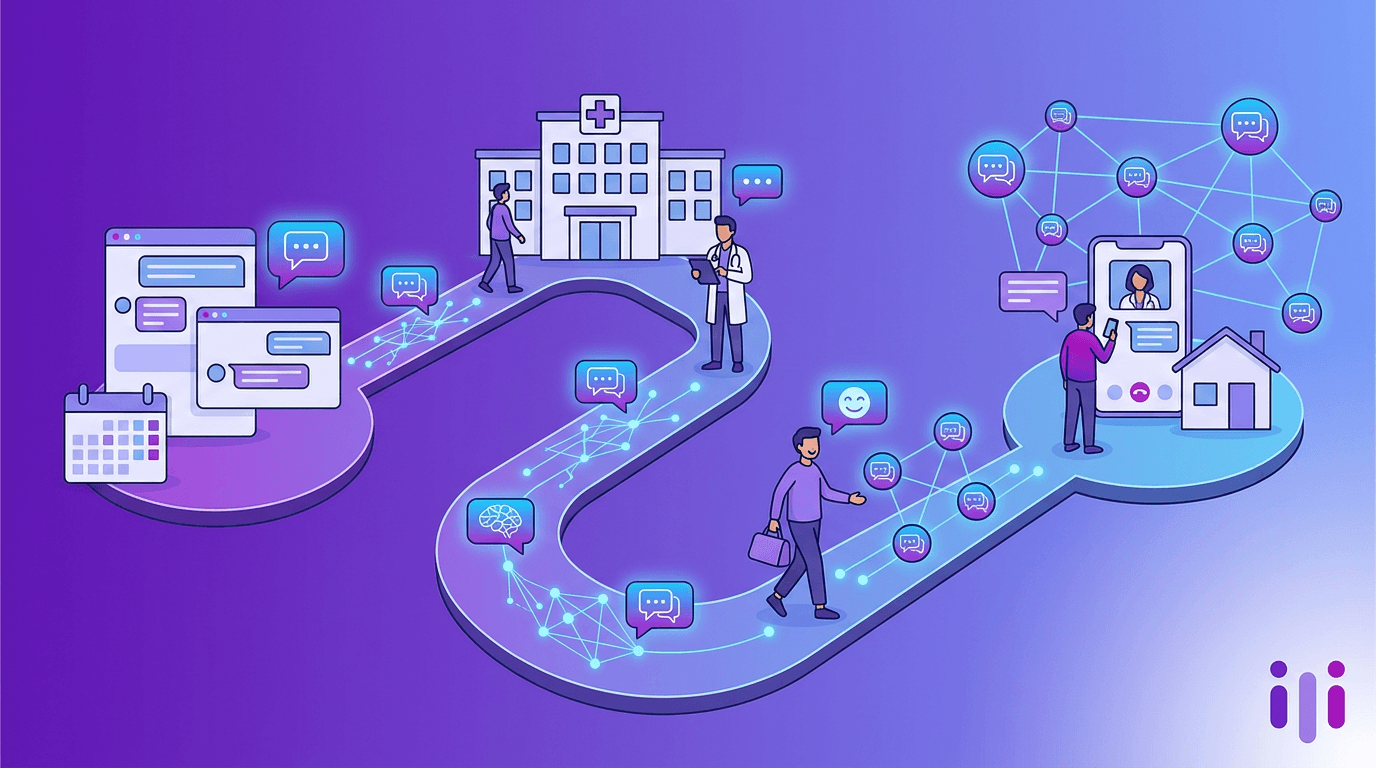

Cleveland Clinic AI Strategy: Conversational Care from First Touch to Discharge

TL;DR

Cleveland Clinic has spent more than a decade building one of the most public AI playbooks in US healthcare. Its anchors are an early IBM Watson partnership in 2014, a deep Epic EHR backbone, a multi-year generative AI pact with Microsoft and G42 announced in 2023, and a quantum-and-AI biomedical research alliance with IBM that includes the on-site IBM Quantum System One installed in 2023. Its current strategy spans the full patient journey: AI-enabled scheduling and digital triage on the front end, ambient clinical documentation and decision support inside the visit, and a still-thin layer of conversational technology on the back end where discharge instructions and follow-up live. The under-deployed lane is the post-visit conversation, the moment when readmission risk, medication confusion, and patient experience scores are actually decided. Cleveland Clinic's playbook is the bellwether for academic medical centers and integrated delivery networks because it shows where AI investment has compounded and where the patient-experience layer still depends on portals, forms, and phone tag. For health systems benchmarking against it, the lesson is not "buy more clinical AI" but "extend the conversation past discharge."

Why Cleveland Clinic is the bellwether for US health-system AI

Cleveland Clinic is the bellwether because it has been publicly experimenting with enterprise AI longer than almost any peer, and because its scale forces every pilot to confront real operational constraints. The system operates 23 hospitals and more than 275 outpatient facilities across Ohio, Florida, Nevada, Canada, the UK, and Abu Dhabi, employs over 81,000 caregivers, and handled roughly 13.7 million outpatient visits in its most recent reporting year. That footprint means anything Cleveland Clinic adopts has to clear privacy, compliance, multi-state licensure, and clinician workflow tests that smaller systems can sidestep.

It also publishes more than most. Cleveland Clinic releases an annual "Top 10 Medical Innovations" list, runs a venture arm (Cleveland Clinic Innovations), and has been a named launch partner for several of the most-watched healthcare AI initiatives of the last decade. That public posture makes it possible to map its actual journey (from the early IBM Watson Genomics work at Lerner College of Medicine, through the Epic standardization push, to the recent generative AI partnerships) without relying on rumor or vendor case studies. For CIOs, CMIOs, and patient-experience leaders comparing notes, Cleveland Clinic functions as the reference architecture even when peers diverge from its specific choices.

Cleveland Clinic's AI partnership history: from Watson to generative AI

Cleveland Clinic's AI history is a layered partnership story rather than a single vendor bet. Four threads matter most for understanding where the system is today.

IBM Watson (2014–present). Cleveland Clinic was an early collaborator with IBM Watson, working with IBM on Watson for Genomics applied to oncology and on educational uses of Watson at the Lerner College of Medicine. The partnership has evolved well beyond the original Watson Health frame: in 2021 Cleveland Clinic and IBM announced a 10-year discovery accelerator covering quantum, AI, and high-performance computing for biomedical research, and in 2023 IBM installed an on-site IBM Quantum System One — the first deployed at a healthcare provider — on Cleveland Clinic's main campus.

Epic (EHR backbone). Cleveland Clinic standardized its enterprise EHR on Epic, completing major rollouts at its Florida and London locations in recent years. That matters because nearly every "AI in the workflow" initiative — ambient documentation, in-basket triage, predictive readmission scoring — rides on Epic's data model and SMART on FHIR endpoints. The choice of Epic is implicitly a choice to bias toward Epic-native AI features (MyChart messaging triage, ambient scribes integrated through Epic's partner program, generative replies in the in-basket).
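To make "rides on FHIR endpoints" concrete, here is a minimal sketch of the kind of read a readmission-scoring or follow-up tool might issue. It only builds a FHIR R4 search URL for a patient's encounters; it does not call any server. The base URL, patient ID, and the `encounter_search_url` helper are hypothetical, and nothing here describes Cleveland Clinic's or Epic's actual configuration. A real integration would also need SMART on FHIR authorization (an OAuth 2.0 access token).

```python
from urllib.parse import urlencode

# Hypothetical base URL for illustration; a real deployment would use the
# health system's own FHIR endpoint plus an OAuth 2.0 access token.
FHIR_BASE = "https://fhir.example.org/R4"


def encounter_search_url(patient_id: str, since: str) -> str:
    """Build a FHIR R4 search URL for a patient's encounters on or after `since`."""
    params = {
        "patient": patient_id,   # standard Encounter search parameter
        "date": f"ge{since}",    # "ge" prefix means "on or after this date"
        "_count": "50",          # page size for the returned Bundle
    }
    return f"{FHIR_BASE}/Encounter?{urlencode(params)}"


if __name__ == "__main__":
    print(encounter_search_url("example-123", "2025-01-01"))
```

The point of the sketch is the dependency, not the query itself: any tool that needs recent encounters, medications, or appointments has to go through the EHR's data model, which is why the EHR choice shapes which AI features are practical.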

Microsoft and G42 (generative AI, 2023). In 2023 Cleveland Clinic, G42, and Microsoft announced a multi-year strategic collaboration to use Microsoft cloud and AI technologies — including Azure OpenAI Service — for biomedical research and patient-care applications. This is the partnership that brought generative AI into the strategic conversation alongside the older, narrower Watson and Epic work.

Smaller, specialty integrations. Cleveland Clinic has piloted ambient clinical documentation tools (Nuance DAX Copilot is the Microsoft-owned offering most relevant to health systems running on Microsoft cloud and Epic), in-house diagnostic ML in cardiology and ophthalmology, and risk-stratification models for heart failure readmission and sepsis.

The pattern across all four threads: Cleveland Clinic has invested heavily in clinical AI — diagnosis, documentation, discovery — and more cautiously in experience AI, the layer that touches patients directly in their own words. The patient-journey breakdown below explains why.

Pre-visit: appointment scheduling, triage, and intake

Pre-visit AI at Cleveland Clinic mostly lives inside MyChart and the Cleveland Clinic mobile app, with selective phone-channel automation layered on top. Patients can search for appointments, complete eCheck-in, and message care teams asynchronously. Symptom-checker style triage and conversational scheduling have been piloted but are not yet the default front door across all service lines.

That front-door shape is typical of large academic medical centers and is also where the biggest patient-experience friction lives. Three pre-visit problems show up repeatedly:

- Scheduling is still phone-and-portal-bound for non-routine care. Specialty referrals, second opinions, and complex pre-op intake often drop into call queues or static online forms, even when the underlying scheduling rules could be expressed as a conversation.

- Intake forms reproduce the clipboard digitally. A PDF or web form that asks for medication lists, allergies, family history, and reason-for-visit is structurally a clipboard with a different bezel — it still flattens patients into checkboxes and depends on them volunteering the right context.

- Triage is rules-based, not conversational. Most digital symptom checkers used by health systems are decision-tree engines. They route, but they don't probe. The richest pre-visit information — "I'm not sure if this is the same pain as last year" — gets lost.

This is exactly the gap a conversational patient intake layer is built to close. Health systems that have replaced PDF intake with conversational AI report richer pre-visit context, fewer no-shows, and shorter rooming times. Mayo Clinic is testing this mechanic in its patient-intake redesign, and consumer-tech primary-care brands like One Medical have been building toward it. Cleveland Clinic could deploy the same mechanic on top of its Epic/Microsoft stack with relatively little new infrastructure: replace the clipboard with a conversation.

In-visit: ambient documentation and clinician AI support

Inside the visit, Cleveland Clinic's AI investments are focused on relieving clinician documentation burden and supporting decision-making, not on changing what the patient experiences in the room. The two strongest threads are ambient clinical documentation and decision-support models.

Ambient documentation. Ambient AI scribes — tools that listen to the visit and draft a structured clinical note for clinician review — have become the most-deployed generative AI use case in US health systems over the last 18 months. Cleveland Clinic's Microsoft and Epic alignment positions it well for Nuance DAX Copilot or comparable Epic-integrated ambient solutions. The American Medical Association has published survey data showing physician burnout remains a top concern and that documentation load is a leading driver, which is why ambient scribes have moved from pilot to enterprise rollout faster than almost any other clinical AI category.

Decision support. Cleveland Clinic researchers have published peer-reviewed work on ML models for sepsis prediction, heart-failure readmission, and image-based diagnosis in cardiology and ophthalmology. These models live inside Epic's predictive framework or as standalone research tools rather than as patient-visible features.

What's missing from the in-visit layer is the patient voice. AI is making the clinician's job easier and the documentation cleaner, but the patient's account of their experience — their fears, their decision criteria, their understanding of the plan — is still mostly captured in the clinician's narrative summary or in a post-visit survey. Conversational AI used in-visit (or immediately around it) could close that loop, capturing the patient's framing in their own words and feeding it back into the care plan. This is closer to the conversational data-collection mechanic used in modern customer research than to anything most health systems have deployed at the bedside.

Discharge and follow-up: where conversational AI is most under-deployed

The post-visit window is where conversational AI is most under-deployed at Cleveland Clinic — and at almost every comparable health system. Discharge instructions, medication reconciliation, post-op check-ins, and 30-day readmission risk live in this window, and most of the touchpoints today are forms, portal messages, paper handouts, and outbound nurse calls.

Three reasons this gap matters more than the others:

- Readmissions are financially and clinically expensive. The Centers for Medicare & Medicaid Services penalizes hospitals through the Hospital Readmissions Reduction Program, which has tied a portion of inpatient reimbursement to risk-adjusted readmission rates since fiscal year 2013. Discharge that doesn't actually land (patients confused about meds, missing follow-ups, ignoring red-flag symptoms) directly drives that penalty.

- Patient-reported outcomes and experience scores are decided here. HCAHPS scores, the leading public measure of inpatient experience, are based on surveys that patients complete after discharge. Static surveys catch what fits a fixed response scale and miss what surfaces in the patient's own words.

- Self-managed chronic care depends on continued conversation. Heart failure, COPD, diabetes, and oncology survivorship all depend on the patient noticing and reporting subtle changes between visits. The instruments most systems use today — pulse-on-demand surveys, portal messages, periodic nurse outreach — are too thin to surface "it depends" answers reliably.

Conversational AI is the natural fit for this lane because the high-value moments are unstructured. "I'm not sure if this dose is the same as before" or "the pain is different than the doctor described" are precisely the kinds of messy, qualified inputs forms can't capture. A persistent, AI-driven conversation that follows up on discharge instructions, probes when answers are uncertain, and escalates when red-flag patterns emerge is structurally different from a survey or a chatbot.
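The follow-up, probe, and escalate behavior described above can be sketched as a single decision step. This is a toy illustration under loud assumptions: the phrase lists, the `Turn` type, and the `next_turn` function are hypothetical, simple substring matching stands in for whatever language model a real product would use, and none of it is clinically validated or taken from any vendor's implementation.

```python
from dataclasses import dataclass

# Hypothetical phrase lists for illustration only; not clinically validated.
RED_FLAGS = ("chest pain", "short of breath", "can't breathe", "fainted", "bleeding")
HEDGES = ("not sure", "i think", "maybe", "different than", "it depends")


@dataclass
class Turn:
    action: str   # "escalate", "probe", or "continue"
    message: str


def next_turn(patient_reply: str) -> Turn:
    """Decide the next step for one patient reply in a discharge follow-up."""
    text = patient_reply.lower()
    # Red flags take priority over everything else: hand off to a human.
    if any(flag in text for flag in RED_FLAGS):
        return Turn("escalate", "Routing this to a nurse for a call back.")
    # Hedged or qualified answers get a follow-up question, not a checkbox.
    if any(hedge in text for hedge in HEDGES):
        return Turn("probe", "Can you tell me more about what seems different?")
    return Turn("continue", "Thanks. Moving on to the next check-in question.")


if __name__ == "__main__":
    for reply in (
        "I'm not sure if this dose is the same as before",
        "I had chest pain last night, not sure why",
        "Taking everything as prescribed",
    ):
        print(next_turn(reply).action, "<-", reply)
```

The ordering is the design choice worth noting: a reply that is both hedged and contains a red flag escalates rather than probes, so uncertainty never delays a human handoff.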

This is the exact lane where Perspective AI's conversational research and intake layer is designed to operate — replacing static forms with AI conversations that follow up, probe, and capture the why behind a patient's response. The same mechanic that turns post-purchase survey fatigue into a continuous voice-of-customer program in SaaS turns post-discharge survey fatigue into an always-on patient-experience signal in health systems. Cleveland Clinic has the EHR, cloud, and partnership infrastructure to extend its journey there; the missing piece in most US health systems is not capability — it is treating discharge and follow-up as conversation, not as a transactional form.

Clinical AI vs experience AI: the two parallel tracks

Cleveland Clinic's AI portfolio runs on two parallel tracks — clinical AI and experience AI — and the two are funded, governed, and measured very differently. Understanding the gap between them is the single most useful frame for benchmarking any large health system's AI strategy.

Clinical AI gets hospital-system funding, IRB oversight, clinical-evidence review, and clinician change-management programs. Experience AI tends to get marketing budget, web-team ownership, and survey-vendor RFPs. The result is a system where the diagnosis is increasingly intelligent and the discharge instructions are still a printed PDF.

The fix isn't moving budget from clinical AI to experience AI — it's recognizing that the experience layer needs the same level of platform thinking. The same way conversational AI is replacing forms in customer research, it can replace forms in patient experience. The infrastructure is mostly already in place at Cleveland Clinic and any peer system running on Epic plus a major cloud — what's missing is the shift in posture from "deploy a portal" to "deploy a conversation."

Lessons for academic medical centers and integrated delivery networks

Academic medical centers and integrated delivery networks (IDNs) benchmarking against Cleveland Clinic should take five lessons from the playbook above.

1. Put the AI partnership strategy in writing. Cleveland Clinic's partnership stack — IBM, Microsoft, G42, Epic — is public, named, and durable. Smaller AMCs and IDNs often run AI as a portfolio of disconnected pilots. Writing the partnership stack down, naming the lanes, and renewing it annually beats the alternative.

2. Standardize the EHR before chasing the model. Cleveland Clinic's Epic standardization is what makes ambient documentation and predictive risk models deployable at scale. Health systems still running fragmented EHRs typically can't operationalize even excellent models. The platform decision dominates the model decision.

3. Build experience AI on top of cloud + EHR you already have. Most US health systems already pay for Microsoft or AWS cloud and have an Epic, Cerner, or Meditech EHR. The infrastructure for conversational patient intake, discharge follow-up, and longitudinal patient reporting is already there. The lift is mostly editorial — replace forms with conversations — not net-new platform spend.

4. Treat discharge and follow-up as the highest-leverage AI lane in 2026. Pre-visit and in-visit AI are crowded with vendors. Discharge and follow-up are crowded with surveys and outbound nurse calls. The vendor-density imbalance is the opportunity.

5. Measure experience AI on the metrics that already exist. HCAHPS, readmission rates, no-show rates, time-to-third-next-available, and net promoter score for primary care are already tracked. Conversational AI in the experience layer should be evaluated against these existing metrics, not against new vendor-defined KPIs.
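As a rough illustration of lesson 5, the sketch below compares a pilot cohort against a baseline on two metrics the article names, 30-day readmission rate and no-show rate. The `Cohort` type, the `compare` function, and all counts are made up for the example. It uses raw rates only; a real evaluation would need risk adjustment, comparable cohorts, and enough volume to rule out noise.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    discharges: int
    readmissions_30d: int
    appointments: int
    no_shows: int

    @property
    def readmission_rate(self) -> float:
        return self.readmissions_30d / self.discharges

    @property
    def no_show_rate(self) -> float:
        return self.no_shows / self.appointments


def compare(baseline: Cohort, pilot: Cohort) -> dict[str, float]:
    """Return pilot minus baseline in percentage points (negative = improvement)."""
    return {
        "readmission_pp": round(
            100 * (pilot.readmission_rate - baseline.readmission_rate), 2
        ),
        "no_show_pp": round(100 * (pilot.no_show_rate - baseline.no_show_rate), 2),
    }


if __name__ == "__main__":
    # Made-up counts for illustration only.
    baseline = Cohort(discharges=400, readmissions_30d=80, appointments=1000, no_shows=150)
    pilot = Cohort(discharges=400, readmissions_30d=68, appointments=1000, no_shows=120)
    print(compare(baseline, pilot))
```

Reporting the difference in percentage points against the system's own baseline keeps the evaluation anchored to metrics leadership already tracks, rather than to a vendor-defined score.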

What community hospitals can copy

Community hospitals can copy more of Cleveland Clinic's playbook than they tend to assume. The published partnership names, the on-site quantum installation, and the global footprint are not the copyable parts. The copyable parts are the posture and the sequencing.

Five practical moves:

- Pick one cloud + one EHR and stop bridging. The single biggest predictor of whether a community hospital can deploy modern AI is whether it has consolidated its EHR. Multi-EHR community systems should treat consolidation as the precondition for AI strategy, not as a parallel project.

- Adopt ambient documentation early. Ambient scribe deployment is the lowest-risk, highest-clinician-satisfaction generative AI use case available today. Community hospitals on Epic or Cerner with Microsoft or AWS cloud are within reach of enterprise pricing for these tools.

- Replace pre-visit PDFs with conversational intake. This is a contained, scope-limited change with measurable impact on rooming time and clinical context capture. Vendors built for conversational intake — including Perspective AI — can deploy on top of existing portals without replacing them.

- Run a discharge-conversation pilot in one service line. Heart failure and joint replacement are the two service lines where 30-day follow-up has the biggest readmission and HCAHPS impact. Pilot a conversational follow-up in one of those lines, measure against baseline, and expand.

- Centralize the AI partnership decision. Even at community-hospital scale, the partnership architecture should be a board- or CMIO-level decision, not a department-by-department vendor pick.

Frequently Asked Questions

What AI partnerships does Cleveland Clinic have?

Cleveland Clinic has publicly disclosed AI partnerships with IBM, Microsoft, G42, and Epic, among others. The IBM partnership dates to 2014 with Watson and was extended to a 10-year discovery accelerator covering quantum, AI, and high-performance computing in 2021. The Microsoft and G42 collaboration was announced in 2023 to apply Microsoft cloud and Azure OpenAI to biomedical research and patient-care applications. Epic is the EHR backbone that nearly all in-workflow AI features ride on top of.

Does Cleveland Clinic use generative AI?

Yes. Cleveland Clinic uses generative AI primarily through its 2023 collaboration with Microsoft and G42 covering Azure OpenAI Service applications, and through Epic-integrated tools such as ambient clinical documentation. Generative AI at Cleveland Clinic is concentrated in clinical documentation, biomedical research, and clinician productivity. Patient-facing generative AI is more selectively deployed and remains an active area of expansion across most US health systems, including Cleveland Clinic.

Where is Cleveland Clinic's AI strategy weakest?

Cleveland Clinic's AI strategy is strongest on the clinical side — diagnosis, documentation, discovery — and weakest on the patient-experience layer of discharge and follow-up. Most discharge instructions, medication reconciliation, and post-op check-ins still rely on PDFs, portal messages, and outbound calls rather than conversational AI. This pattern is shared across nearly every large US health system, which makes it the highest-leverage area for new investment in 2026.

How does Cleveland Clinic's AI strategy compare to Mayo Clinic's?

Cleveland Clinic and Mayo Clinic are the two most-studied US health system AI programs. Mayo Clinic publishes more about clinical AI partnerships with Google Cloud and NVIDIA and tends to lead on AI-enabled imaging research, while Cleveland Clinic's profile leans heavier on the IBM, Microsoft, and Epic stack. Both are strongest on clinical AI and selectively deployed on patient-experience AI. The detailed comparison is covered in the companion article, "Mayo Clinic AI patient experience."

What can community hospitals copy from Cleveland Clinic's AI playbook?

Community hospitals can copy Cleveland Clinic's posture and sequencing even when they cannot match its scale. The highest-leverage moves are EHR consolidation onto a single platform, ambient documentation deployment, replacing PDF intake with conversational AI, piloting discharge follow-up conversations in heart failure or joint replacement service lines, and centralizing the AI partnership decision at the CMIO or board level. These moves do not require academic-medical-center scale to deliver measurable gains.

How is conversational AI different from a healthcare chatbot?

Conversational AI is different from a healthcare chatbot in that it follows up, probes, and captures the reasoning behind a patient's response rather than routing to pre-scripted answers. A traditional healthcare chatbot answers FAQs or routes to forms; a conversational AI patient-intake or follow-up agent treats the exchange as an interview, surfacing context that forms and chatbots both miss. The distinction matters most in discharge follow-up and patient experience, where the high-value answers are messy and qualified.

Conclusion: Cleveland Clinic's AI strategy points to where the next investment should land

Cleveland Clinic's AI strategy — anchored by IBM, Microsoft, G42, and Epic, executed across 23 hospitals and 13.7 million annual outpatient visits — is the reference architecture for US academic medical centers and integrated delivery networks in 2026. The clinical AI layer is well-funded, deeply partnered, and increasingly built into the workflow. The experience AI layer is thinner, especially in the discharge and follow-up window where readmission risk and patient-experience scores are actually decided.

For health system leaders benchmarking against Cleveland Clinic, the practical takeaway is to extend the same platform thinking to the patient-experience layer that has already been applied to the clinical layer. The infrastructure — cloud, EHR, partnership posture — is largely in place. The shift required is editorial: replace the forms, surveys, and outbound calls that dominate discharge and follow-up with conversational AI that captures the patient's voice in their own words. That is the lane Perspective AI is built for, and it is the under-deployed half of Cleveland Clinic's playbook that the next generation of health-system AI strategy needs to fill.
