
HubSpot AI Customer Research: How a $30B CRM Leader Talks to Customers

TL;DR

HubSpot built its $30B+ market cap on a contrarian bet: SMBs deserve enterprise-grade software, and you only earn that trust by listening relentlessly. Today the company runs customer research across five product Hubs, 200,000+ customers, and tens of thousands of partners. The model is federated — embedded researchers inside each Hub, a shared research ops layer, and continuous in-product feedback. Breeze AI has quietly become the most important shift: instead of relying on scheduled interviews and surveys, HubSpot now treats every Breeze conversation, every Copilot question, and every Agent task as a research signal. This post breaks down how the operation works, what changed when AI entered the loop, and which parts of the playbook actually transfer to smaller SaaS teams.

What is HubSpot's approach to customer research?

HubSpot's approach to customer research is a federated, always-on system that combines embedded researchers inside each product Hub, in-product feedback at every meaningful surface, a shared insights repository, and a layer of AI assistants (Breeze) that turn customer conversations into structured signal. It optimizes for breadth — many small inputs from many customer segments — over depth-only research, and it treats research as a continuous operating cadence rather than a project.

The contrast with how most CRM vendors run research is sharp. Legacy enterprise CRM platforms tend to centralize research inside a corporate UX team, schedule it around major releases, and weight Fortune 500 advisory boards heavily. HubSpot weights the long tail. A 4-person agency in Lisbon using Marketing Hub Starter shows up in the same research repository as a public mid-market company on the Customer Platform tier. That choice is downstream of HubSpot's go-to-market: SMB-first, product-led, transparent. Research has to mirror the customer base, not the revenue curve.

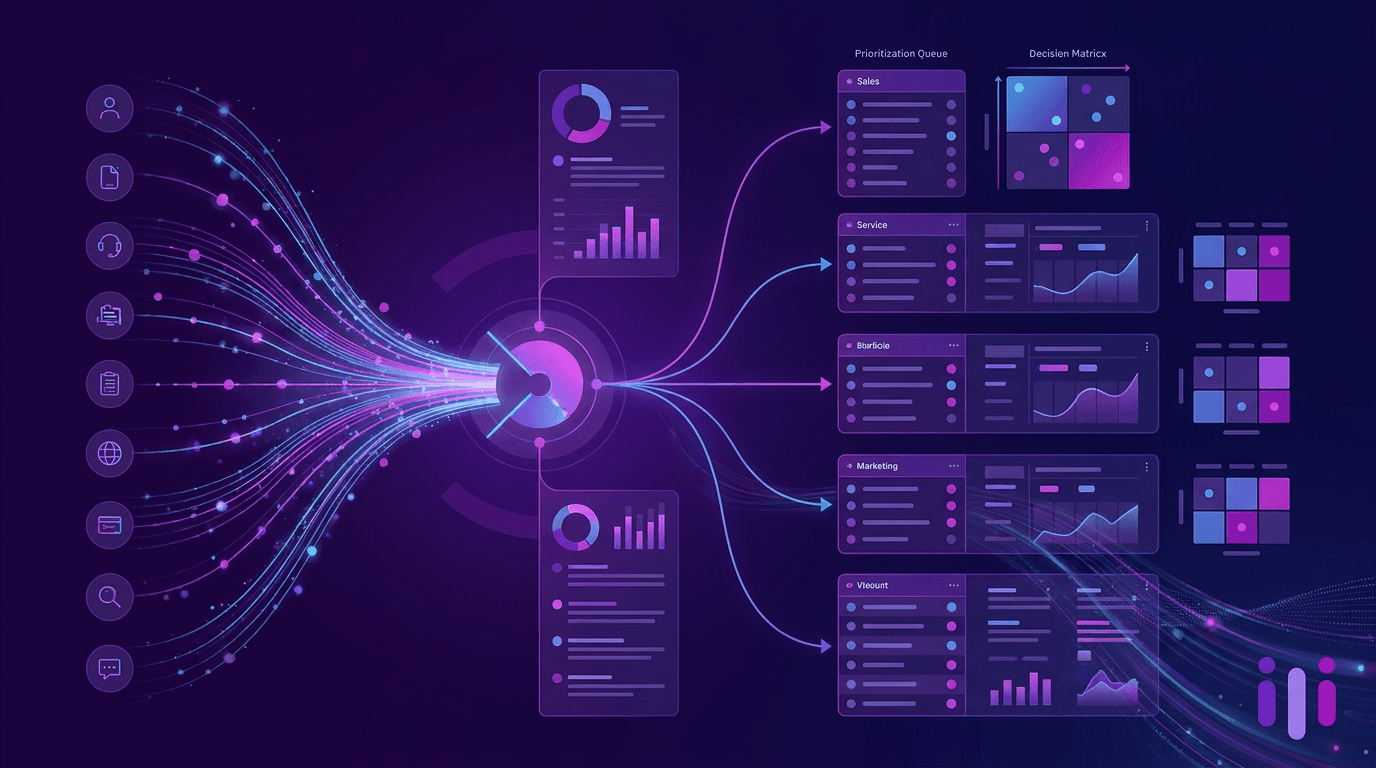

The HubSpot customer research stack

Three layers do most of the work.

1. Embedded research squads. Each Hub has its own research function staffed against its product groups. A Sales Hub researcher might cover prospecting, sequences, calling, and the deal record; a Service Hub researcher might cover the help desk, knowledge base, and customer portal. They run generative interviews, evaluative testing, diary studies, and copilot-style sessions where customers think out loud while using new features. This is the same embedded model used by companies like Atlassian and Datadog at their scale.

2. Research operations and insights. A smaller central team owns the boring-but-load-bearing parts: recruitment panels, incentive payments, legal/privacy review, the insights repository, tagging taxonomy, and tooling contracts. Without this layer, federated research devolves into 50 PMs running 50 incompatible Notion docs.

3. Always-on customer signal. In-product NPS, CSAT after key tasks, contextual micro-surveys, feedback widgets on every Hub, the Ideas Forum, community moderation, support ticket clustering, win/loss interview transcripts, and now Breeze conversation logs. None of these are "research" in the classical interview-based sense, but together they produce more behavioral evidence per week than any quarterly research roadmap could.
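To make the always-on layer concrete, here is a minimal sketch of scoring one of those signals, in-product NPS, per Hub. The NPS arithmetic is the standard one (share of 9-10 ratings minus share of 0-6 ratings); the function names, segment labels, and sample ratings are illustrative assumptions, not HubSpot's data or tooling.

```python
def net_promoter_score(ratings):
    """Standard NPS: % promoters (9-10) minus % detractors (0-6), in -100..100."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


def nps_by_segment(responses):
    """Group (segment, rating) pairs and score each segment separately."""
    by_segment = {}
    for segment, rating in responses:
        by_segment.setdefault(segment, []).append(rating)
    return {segment: net_promoter_score(r) for segment, r in by_segment.items()}


# Hypothetical survey responses, invented for illustration only.
sample = [
    ("Sales Hub", 10), ("Sales Hub", 9), ("Sales Hub", 6), ("Sales Hub", 8),
    ("Service Hub", 3), ("Service Hub", 10),
]
scores = nps_by_segment(sample)
```

Scoring per segment rather than in aggregate is the point: a blended number would hide the gap between a Hub that is doing well and one that is not.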

The tooling stack has shifted heavily toward AI-native discovery platforms over the last 18 months. HubSpot uses a mix of incumbents (FullStory, Pendo, Qualtrics components) and newer entrants — many of which appear on every PM's shortlist of AI product feedback tools and continuous discovery tools.

How they research across 5 product lines

Marketing Hub, Sales Hub, Service Hub, Content Hub (formerly CMS Hub), and Operations Hub each have their own buyer, user, jobs-to-be-done, and success metric. Marketing Hub buyers care about MQL volume and pipeline contribution. Service Hub buyers care about CSAT and ticket deflection. Content Hub buyers care about page performance and developer velocity. Operations Hub buyers — often RevOps leaders — care about data hygiene and system reliability.

A single shared customer research function can't optimize for all five. So HubSpot does three things at once.

It treats every Hub like its own product company. Each has its own roadmap, GM, PM org, design and research talent, and OKRs. Research priorities inside Sales Hub are negotiated by the Sales Hub leadership team, not by a corporate research VP. This avoids the failure mode where a centralized team produces beautifully written reports nobody acts on.

It enforces shared infrastructure. Recruitment, repositories, panels, and feedback widgets are common. So when a Service Hub PM wants to know how customers respond to AI-generated reply drafts, they can search transcripts from related Sales Hub copilot studies in minutes. The insights aren't siloed even when the squads are.

It defines a cross-Hub research agenda each year. These are the "platform bets" — things that only make sense if you look across Hubs. Examples: the smart CRM contact record, Breeze AI, the unified customer platform vision, pricing/packaging changes. These get a dedicated cross-functional research swarm.

If you want a comparable model in a different category, look at how Notion runs research across docs, projects, wikis, and AI — same federated logic, smaller scale.

What Breeze AI changed about feedback collection

Breeze is HubSpot's AI surface area: Breeze Copilot inside the product, Breeze Agents for prospecting / content / customer support / knowledge base / social, and Breeze Intelligence for enriched data and buyer intent. From the outside it looks like a product launch. From the research team's perspective it's an instrumentation shift.

Three changes matter.

Conversations replaced clicks as the dominant signal. Before Breeze, the richest behavioral data was clickstream — what the user clicked, when, in what order. Clickstream is fast to instrument but semantically thin. You see that someone abandoned a workflow; you don't know why. With Breeze Copilot, customers literally type their intent: "draft a follow-up email for the deals I haven't touched in 14 days," "summarize this thread for my manager," "why is this report showing zero?" Every prompt is a jobs-to-be-done statement in the customer's own words.

Failure cases became free. When a Breeze Agent can't complete a task, that failure is logged, tagged, and routable to the relevant Hub team. Historically you'd need a usability study to discover that a Sales Hub workflow confuses mid-market admins. Now the AI fails in production, with full context, in front of thousands of customers per week, and the data goes straight into the research repository.
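A rough sketch of what "logged, tagged, and routable" could look like in code follows. Everything here is an assumption made for illustration: the `AgentFailure` fields, the agent-to-team mapping, and the fallback queue are hypothetical and do not describe HubSpot's internal schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentFailure:
    agent: str   # hypothetical agent identifier
    task: str    # what the customer asked the agent to do
    reason: str  # why the agent could not complete it


# Hypothetical ownership map from agent to the Hub team that triages it.
OWNERS = {
    "prospecting": "Sales Hub",
    "customer_support": "Service Hub",
    "content": "Content Hub",
}


def route_failures(failures, owners=OWNERS, fallback="Research Ops"):
    """Bucket failures by owning team; unmapped agents go to the fallback queue."""
    queues = defaultdict(list)
    for failure in failures:
        queues[owners.get(failure.agent, fallback)].append(failure)
    return dict(queues)


failures = [
    AgentFailure("prospecting", "enrich lead list", "missing permissions"),
    AgentFailure("customer_support", "refund order", "no billing access"),
    AgentFailure("social", "schedule post", "account not connected"),
]
queues = route_failures(failures)
```

The fallback queue matters as much as the mapping. A failure that no team owns is usually the one most worth a researcher's attention.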

Onboarding research collapsed into the product. New customer activation used to require scheduled interviews, recorded onboarding sessions, and survey follow-ups, the same pattern detailed in our Stripe onboarding philosophy breakdown. With Breeze, the onboarding assistant carries the conversation, and the conversation transcript is the research artifact. PMs review aggregated Breeze conversation themes weekly. The cycle time from "we don't understand what's blocking new admins" to "here are the top five blockers ranked by frequency" went from quarters to days.
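The "top five blockers ranked by frequency" step is simple once conversations carry theme tags. The sketch below assumes the tagging has already happened upstream, by a person or a model; the theme names and sample week are invented for illustration.

```python
from collections import Counter


def top_blockers(tagged_conversations, n=5):
    """Rank themes by how many conversations mention them.

    Each conversation is a list of theme tags. A theme counts once per
    conversation, so one long chat cannot dominate the ranking.
    Ties are broken alphabetically to keep the output stable.
    """
    counts = Counter()
    for themes in tagged_conversations:
        counts.update(set(themes))
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:n]


# Hypothetical week of tagged onboarding conversations.
week = [
    ["import_contacts", "email_domain_setup"],
    ["import_contacts"],
    ["import_contacts", "import_contacts", "user_permissions"],
    ["email_domain_setup"],
]
blockers = top_blockers(week, n=2)
```

Counting conversations rather than raw mentions is a deliberate choice here: the question is how many customers hit a blocker, not how often one frustrated customer repeated it.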

There's a quieter implication. If conversation logs are research data, the research team's main craft shifts from running interviews to designing the conversational surfaces that produce good data. That is a different skill set, and HubSpot is staffing for it — recent hires on the research side increasingly come from conversation design, computational linguistics, and applied AI backgrounds, not just traditional UX research.

Lessons for multi-product SaaS companies

You don't need 200,000 customers to use the patterns underneath HubSpot's playbook. Six are portable.

1. Federate research before you centralize it. If you have more than one product line, embed researchers and PMs in each, then add a thin ops layer on top. Centralized-only research teams become bottlenecks the moment you ship a second product.

2. Make in-product feedback non-optional. Every Hub has feedback affordances on every meaningful surface. The cost is some UI clutter; the benefit is that you never have to "go do research" because research is happening continuously. Most SaaS teams under-instrument this dramatically.

3. One repository, one taxonomy. Federated research only compounds if findings are searchable across teams. Pick a repository, enforce tagging, and audit it quarterly. This is unsexy and load-bearing.

4. Treat AI conversations as research data from day one. If you're shipping any AI assistant or copilot, the transcripts are gold. Build the pipeline that routes them into your insights repository before you ship the feature, not after.

5. Separate the buyer from the user, especially across Hubs. HubSpot's research distinguishes between the VP Sales who buys Sales Hub and the SDR who uses it. The same is true for Marketing Hub (CMO vs. marketing ops), Service Hub (VP Support vs. agent), and Content Hub (Head of Web vs. developer). If your research only talks to one side, your roadmap will be wrong half the time.

6. Cross-product research is a separate function. Platform bets — pricing, identity, AI, data model — need their own research swarm that doesn't report to any single Hub. Otherwise no Hub will fund the work, and the platform bets are usually where multi-product SaaS companies win or lose the next five years.

The thread connecting all of these is operating cadence. HubSpot doesn't out-research smaller SaaS companies because it has more researchers. It out-researches them because research is a weekly operating rhythm with infrastructure behind it, not a project that runs ahead of every major release.
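Lessons 3 and 4 can be combined in a small amount of code: one repository, one enforced taxonomy, and AI transcripts entering through the same door as interview notes. The following is a minimal in-memory sketch under stated assumptions. The `Insight` fields, the `InsightsRepository` class, and the tag vocabulary are all hypothetical, and a real system would sit on a database or a dedicated research repository tool.

```python
from dataclasses import dataclass

# Hypothetical controlled vocabulary; a real taxonomy would be owned by research ops.
TAXONOMY = {"onboarding", "pricing", "reporting", "ai_assistant"}


@dataclass(frozen=True)
class Insight:
    source: str     # e.g. "interview", "copilot_transcript"
    product: str    # which product line the finding came from
    text: str
    tags: frozenset


class InsightsRepository:
    def __init__(self, taxonomy):
        self.taxonomy = set(taxonomy)
        self.insights = []

    def add(self, source, product, text, tags):
        """Store a finding, rejecting any tag outside the shared taxonomy."""
        unknown = set(tags) - self.taxonomy
        if unknown:
            raise ValueError(f"tags not in taxonomy: {sorted(unknown)}")
        insight = Insight(source, product, text, frozenset(tags))
        self.insights.append(insight)
        return insight

    def search(self, tag):
        """Return findings with this tag, regardless of product or source."""
        return [i for i in self.insights if tag in i.tags]


repo = InsightsRepository(TAXONOMY)
repo.add("interview", "Sales Hub", "Admins distrust AI-drafted emails", {"ai_assistant"})
repo.add("copilot_transcript", "Service Hub", "Agents edit most reply drafts", {"ai_assistant"})
matches = repo.search("ai_assistant")
```

Rejecting unknown tags at write time is what keeps findings from different teams searchable together. Cleaning up free-form tags after the fact is the quarterly audit nobody wants to do.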

Conclusion

HubSpot's customer research operation looks complicated from the outside: five Hubs, hundreds of researchers and PMs, embedded squads, shared research ops, AI assistants spinning off transcripts. The internal logic is simple. Listen to a lot of customers, continuously, with shared infrastructure, and let the product surfaces themselves do most of the listening.

Breeze AI is the inflection point. Conversational interfaces produce a kind of research data that scheduled interviews and surveys can't match: in-context, in-customer's-own-words, at production volume, including failure cases. The companies that recognize this early — and treat their AI surfaces as instrumentation, not just features — will compound a research advantage that's very hard to copy after the fact.

For most SaaS teams, the takeaway isn't to rebuild HubSpot's org chart. It's to install the operating cadence, instrument every customer-facing AI surface as a research source, and stop treating customer research as a thing the research team owns alone.

Frequently Asked Questions

How does HubSpot conduct customer research across multiple product lines?

HubSpot pairs each product Hub (Marketing, Sales, Service, Content/CMS, Operations) with embedded researchers, PMs, and designers who run continuous interviews, in-product feedback loops, and quantitative panels. A central research ops team standardizes recruitment, repositories, and tagging so insights from one Hub can be searched and reused by the others. Cross-Hub platform bets (like Breeze AI or pricing changes) get a separate research swarm that doesn't sit inside any single Hub.

What is HubSpot Breeze AI?

Breeze is HubSpot's umbrella for AI features across the CRM platform. It includes Breeze Copilot (an in-product assistant), Breeze Agents for prospecting, content, customer support, knowledge base, and social media, and Breeze Intelligence for enriched company and contact data. For the research team it has a second life as an instrumentation layer — every Breeze conversation produces structured signal about what customers ask for, where they get stuck, and which tasks they want automated.

How big is HubSpot's research team?

HubSpot has not published exact headcount, but public job postings, conference talks, and LinkedIn data suggest a user research and insights org in the low hundreds, distributed across product groups in Cambridge, Dublin, Singapore, and remote. It is structured as embedded squads inside each Hub rather than a single centralized team, with a smaller research ops and strategy core that owns shared infrastructure.

How does HubSpot prioritize roadmap across Marketing, Sales, and Service Hubs?

Priorities come from a mix of inputs: revenue signal by Hub, NPS and CSAT by persona, win/loss interviews, in-product feedback, support ticket clustering, and competitive intelligence. Leadership sets cross-Hub bets each year — currently Breeze AI and the unified customer platform — and Hub GMs translate those bets into quarterly themes their PMs validate with customers before any major build. Each Hub has its own GM, P&L, and research function, so most decisions are negotiated inside the Hub rather than imposed from a corporate roadmap.

Can SMB-focused SaaS companies copy HubSpot's research playbook?

Partly. The federated structure assumes you already have multiple product lines and a research-mature culture, so smaller companies should not start there. What SMB SaaS companies can copy immediately is the operating cadence: always-on feedback in product, weekly customer conversations per PM, a single searchable insights repository, and AI assistants that turn passive support and onboarding chats into research data without adding manual interview load. Those four moves capture most of the value at a fraction of the headcount.
